I am creating an HTTP Live Streaming client for Mac that will control video playback on a large screen. My goal is to have a control UI on the main screen and full-screen video on the secondary screen.

Using AVFoundation, I can open the stream and control all aspects of it from my control UI. I am now trying to duplicate the video on a secondary screen, and this is proving more difficult than I imagined...

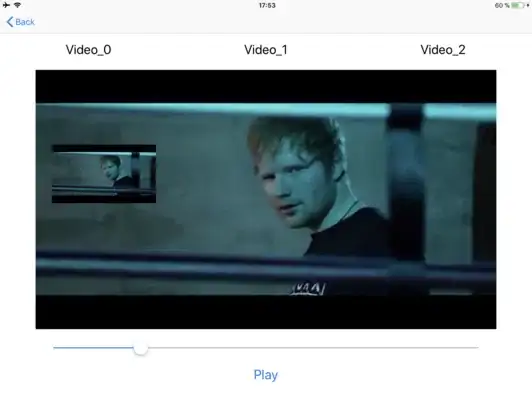

On the control screen, I have an AVPlayerLayer that displays the video content from an AVPlayer. My plan was to create another AVPlayerLayer and give it the same player, so that both layers show the same video at the same time in two different views. However, that is not working.
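In rough terms, the attempt looks like the following sketch. It is illustrative only: `makeLayers(sharing:)` is a hypothetical helper name, the video gravity setting is an assumption, and the layers would still need to be added to a view on each screen.

```swift
import AVFoundation

// Hypothetical helper: attaches ONE shared AVPlayer to two separate layers,
// one for the control UI and one for the full-screen view.
func makeLayers(sharing player: AVPlayer) -> (control: AVPlayerLayer, fullScreen: AVPlayerLayer) {
    // Layer shown in the control UI on the main screen.
    let controlLayer = AVPlayerLayer(player: player)
    controlLayer.videoGravity = .resizeAspect

    // Layer intended for the secondary screen, backed by the same player.
    let fullScreenLayer = AVPlayerLayer(player: player)
    fullScreenLayer.videoGravity = .resizeAspect

    return (controlLayer, fullScreenLayer)
}
```

Both layers reference the same player object, but in my case only one of them ends up showing video.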

Digging deeper, I found this in the AVFoundation docs:

> You can create arbitrary numbers of player layers with the same AVPlayer object. Only the most-recently-created player layer will actually display the video content on-screen.

That does not help me, because I need the video to display correctly in both views.

I can create a new AVPlayerItem from the same AVAsset, create a new AVPlayer for it, and attach that player to a new AVPlayerLayer. Video then shows up in the second view, but the two views are no longer in sync: they are two different players, each generating its own audio stream and each playing a different part of the same stream.
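A minimal sketch of that workaround is below. The helper name `makeIndependentPlayerLayer(for:)` is hypothetical and only there to illustrate the idea; it is not a definitive implementation.

```swift
import AVFoundation

// Hypothetical helper: builds a second, fully independent player
// from the same asset and wraps it in its own layer.
func makeIndependentPlayerLayer(for asset: AVAsset) -> AVPlayerLayer {
    // A new item is needed because an AVPlayerItem belongs to one player at a time.
    let item = AVPlayerItem(asset: asset)
    // This player has its own clock and its own audio output,
    // which is why it drifts from the original player.
    let player = AVPlayer(playerItem: item)
    return AVPlayerLayer(player: player)
}
```

Calling this twice with the same asset yields two layers that both render video, but each is driven by a separate player, so nothing keeps them aligned.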

Does anyone have any suggestions on how to get the same AVPlayer content into two different views? Perhaps some sort of CALayer mirroring trick?