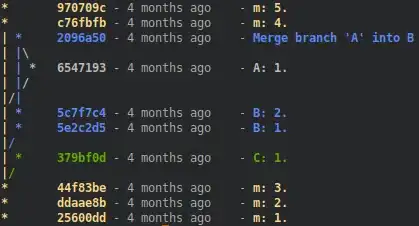

An end user can copy a table from a PDF and paste the text into the OpenAI Playground, like this:

bird_id bird_posts bird_likes

012 2 5

013 0 4

056 57 70

612 0 12

The user then prompts GPT with "Create table with the given text", and GPT generates a table like the one below:

This works as expected. But when the input text is larger (say 1076 tokens), I get the following error:

Token limit error: The input tokens exceeded the maximum allowed by the model. Please reduce the number of input tokens to continue. Refer to the token count in the 'Parameters' panel for more details.

I will use Python for text preprocessing and will get the data from the UI. If my input were ordinary text (like passages), I could use the approaches suggested by LangChain. But I cannot apply iterative summarization to tabular text, because I might lose rows or columns.
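One row-preserving alternative to summarization, shown here only as a rough sketch, is to split the pasted text by rows and repeat the header line in every chunk, so each chunk can be sent as its own request and the resulting tables concatenated afterwards. The function name `chunk_table_text`, the `max_tokens` budget, and the whitespace-based token estimate are all assumptions made for illustration; a real tokenizer for the target model would give more accurate counts.

```python
def chunk_table_text(text, max_tokens, count_tokens=None):
    """Split pasted table text into chunks that each start with the header row.

    Hypothetical helper: no row is dropped or summarized, rows are only grouped.
    """
    if count_tokens is None:
        # Assumption: whitespace-separated words as a crude stand-in for model tokens.
        count_tokens = lambda s: len(s.split())

    # Drop the blank lines that copy-pasting from a PDF tends to leave behind.
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    if not lines:
        return []

    header, rows = lines[0], lines[1:]
    header_cost = count_tokens(header)

    chunks, current, used = [], [], header_cost
    for row in rows:
        cost = count_tokens(row)
        # Start a new chunk when adding this row would exceed the budget.
        if current and used + cost > max_tokens:
            chunks.append("\n".join([header] + current))
            current, used = [], header_cost
        current.append(row)
        used += cost

    # Flush the last chunk (or the header alone if there were no rows).
    if current or not chunks:
        chunks.append("\n".join([header] + current))
    return chunks


sample = "bird_id bird_posts bird_likes\n\n012 2 5\n\n013 0 4\n\n056 57 70\n\n612 0 12"
for chunk in chunk_table_text(sample, max_tokens=9):
    print(chunk)
    print("---")
```

A single row that is larger than the budget still ends up in a chunk of its own, so this sketch does not guarantee that every chunk fits; it only guarantees that rows and the header are preserved.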

Any suggestions on how this can be handled?