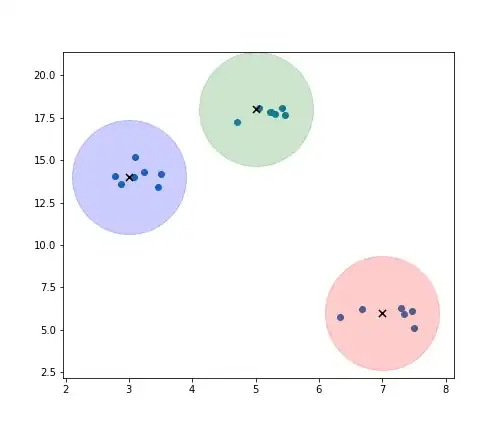

I am trying to find angles of a stockpile (on the left and right sides) by using Otsu threshold to segment the image. The image I have is like this:

In the code, I segment the image and then look for the first row that contains a black pixel.

The segmented photo doesn't seem to have any black pixels in the white background, but the code still detects some black pixels there, even though I have used `morphology.opening`.
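A quick way to check whether stray black pixels really exist is to label the connected black regions and list their sizes, since specks of a few pixels are easy to miss in a plot. This is only a minimal sketch: it assumes a boolean mask like `opened_image` (True = white), and the helper name `black_region_sizes` and the toy mask are made up for illustration.

```python
import numpy as np
from scipy import ndimage


def black_region_sizes(mask):
    """Return the pixel count of each connected black (False) region."""
    labels, count = ndimage.label(~mask)  # label connected False regions
    # bincount index 0 counts the white pixels (label 0); skip it
    return np.bincount(labels.ravel(), minlength=count + 1)[1:]


# Toy mask standing in for opened_image (not the real photo):
# white background, one large black block and one 1-pixel speck.
mask = np.ones((20, 20), dtype=bool)
mask[10:20, 5:15] = False   # "pile": 100 pixels
mask[2, 3] = False          # stray speck: 1 pixel

sizes = black_region_sizes(mask)
print(sorted(sizes.tolist()))
```

If the real mask reports more than one region, the small ones are presumably the pixels that the row scan picks up first.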

If I run the same code on a different image, the problem doesn't occur.

How do I fix this problem? Any ideas? (The next step would be to find the angles on the left-hand and right-hand sides.)
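One thing that may be worth checking: by the usual definitions, opening removes small white features, while closing removes small black features on a white background. The toy example below is only a sketch of that idea. It uses the `scipy.ndimage` grey-scale operators on a made-up 9×9 array rather than the real photo, and the 3×3 window size is an arbitrary choice.

```python
import numpy as np
from scipy import ndimage

# White (1) background with a single black (0) speck in the middle.
img = np.ones((9, 9), dtype=np.uint8)
img[4, 4] = 0

# Opening = erosion followed by dilation: the black speck survives.
opened = ndimage.grey_opening(img, size=(3, 3))

# Closing = dilation followed by erosion: the black speck is filled in.
closed = ndimage.grey_closing(img, size=(3, 3))

print("speck after opening:", opened[4, 4])
print("speck after closing:", closed[4, 4])
```

If the same holds for the real mask, a closing with a footprint larger than the specks might be what removes them, whereas a closing with a 1×1 footprint leaves the image unchanged.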

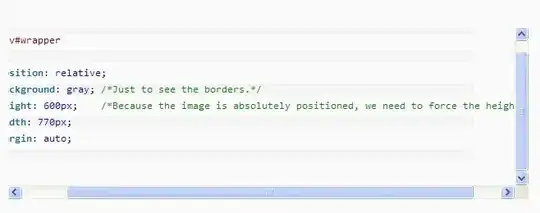

The code is attached here:

    from skimage import io, filters, morphology, measure
    import numpy as np
    import cv2 as cv
    from scipy import ndimage
    import math
    import matplotlib.pyplot as plt

    # Load the image
    image = io.imread('mountain3.jpg', as_gray=True)

    # Apply Otsu's thresholding to segment the image
    segmented_image = image > filters.threshold_otsu(image)

    # Perform morphological closing to fill small gaps
    structuring_element = morphology.square(1)
    closed_image = morphology.closing(segmented_image, structuring_element)

    # Apply morphological opening to remove small black regions in the white background
    structuring_element = morphology.disk(10)  # Adjust the disk size as needed
    opened_image = morphology.opening(closed_image, structuring_element)

    # Fill larger gaps using binary_fill_holes
    #filled_image = measure.label(opened_image)
    #filled_image = filled_image > 0

    # Display the segmented image after filling the gaps
    io.imshow(opened_image)
    io.show()

    # Find the first row containing black pixels
    first_black_row = None
    for row in range(opened_image.shape[0]):
        if np.any(opened_image[row, :] == False):
            first_black_row = row
            break

    if first_black_row is not None:
        edge_points = []  # List to store the edge points

        # Iterate over the rows from the first black row downwards
        for row in range(first_black_row, opened_image.shape[0]):
            black_pixel_indices = np.where(opened_image[row, :] == False)[0]
            if len(black_pixel_indices) > 0:
                # Store the first and last black pixel in this row (left and right edges)
                left_x = black_pixel_indices[0]
                right_x = black_pixel_indices[-1]
                y = row

                # Append the edge point coordinates
                edge_points.append((left_x, y))
                edge_points.append((right_x, y))

        if len(edge_points) > 0:
            # Plot the edge points
            edge_points = np.array(edge_points)
            plt.figure()
            plt.imshow(opened_image, cmap='gray')
            plt.scatter(edge_points[:, 0], edge_points[:, 1], color='red', s=1)
            plt.title('Edge Points')
            plt.show()
        else:
            print("No edge points found.")
    else:
        print("No black pixels found in the image.")
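
For the next step (the left and right angles), one possible approach is to fit a straight line to each flank's edge points and convert the slope to an angle. This is a sketch under assumptions: the points are (x, y) pairs as in `edge_points`, each flank is roughly straight, and the helper name `flank_angle_deg` and the synthetic triangle are hypothetical stand-ins for the real data.

```python
import numpy as np


def flank_angle_deg(xs, ys):
    """Fit y = m*x + c and return the flank's angle to the horizontal in degrees."""
    slope, _intercept = np.polyfit(xs, ys, 1)
    return np.degrees(np.arctan(abs(slope)))


# Synthetic pile: apex at (50, 0), both flanks descend at 45 degrees.
# Image rows grow downwards, so y increases away from the apex.
ys = np.arange(0, 40)
left_xs = 50 - ys    # left flank moves left as y grows
right_xs = 50 + ys   # right flank moves right as y grows

left_angle = flank_angle_deg(left_xs, ys)
right_angle = flank_angle_deg(right_xs, ys)
print(round(left_angle, 1), round(right_angle, 1))
```

With the array built in the code above, the left-hand points would be `edge_points[0::2]` and the right-hand points `edge_points[1::2]`, because each row appends the left point first. For very steep flanks, fitting x as a function of y may be better conditioned than fitting y as a function of x.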