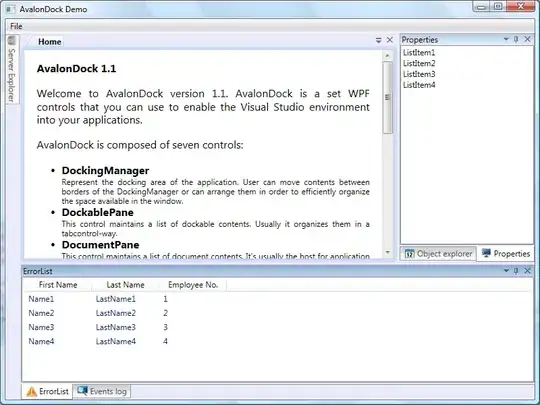

I am using terraform to implement a databricks job in Azure. I have a python wheel that I need to execute in this job. Following terraform Azure databricks documentation at this link I know how to implement a databricks notebook job. However, what I need is a databricks job of type "python wheel". All the examples provided in the mentioned link are to create databricks jobs of either type "notebook_task", or "spark_jar_task" or "pipeline_task". None of them is what I exactly need. If you look into databricks workspace however, you can see there is a specific job of type "python wheel". Below you can see this in the workspace:

To elaborate, I have already created a job by following the documentation. Here is my main.tf file:

```hcl
resource "databricks_notebook" "this" {
  path     = "/Users/myusername/${var.notebook_subdirectory}/${var.notebook_filename}"
  language = var.notebook_language
  source   = "./${var.notebook_filename}"
}

resource "databricks_job" "sample-tf-job" {
  name                = var.job_name
  existing_cluster_id = "0342-285291-x0vbdshv" ## databricks_cluster.this.cluster_id

  notebook_task {
    notebook_path = databricks_notebook.this.path
  }
}
```

As I said, this job is of type "Notebook", which you can also see in the screenshot. The job I need is of type "Python wheel".

I am fairly sure the Terraform provider for Databricks already supports "Python wheel" jobs: its source code defines a Python wheel task (currently at line 49). However, it is not clear to me how to use it in my code. Below is the source code I am referring to:

```go
// PythonWheelTask contains the information for python wheel jobs
type PythonWheelTask struct {
	EntryPoint      string            `json:"entry_point,omitempty"`
	PackageName     string            `json:"package_name,omitempty"`
	Parameters      []string          `json:"parameters,omitempty"`
	NamedParameters map[string]string `json:"named_parameters,omitempty"`
}
```
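The `json` tags on this struct set the key names used when a task is serialized, which hints at the fields a Python wheel task expects. The minimal Go sketch below is only an illustration under stated assumptions. It copies the struct as quoted, uses a hypothetical helper `taskJSON`, and fills in hypothetical package and entry-point names. It shows which keys are emitted and that empty fields are dropped because of `omitempty`.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// PythonWheelTask is copied from the provider source quoted above so that
// this example is self-contained.
type PythonWheelTask struct {
	EntryPoint      string            `json:"entry_point,omitempty"`
	PackageName     string            `json:"package_name,omitempty"`
	Parameters      []string          `json:"parameters,omitempty"`
	NamedParameters map[string]string `json:"named_parameters,omitempty"`
}

// taskJSON is a hypothetical helper that serializes a task to JSON using the
// struct's json tags; fields left empty are omitted because of omitempty.
func taskJSON(t PythonWheelTask) (string, error) {
	b, err := json.Marshal(t)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	// Hypothetical package name and entry point, for illustration only.
	task := PythonWheelTask{
		PackageName: "my_package",
		EntryPoint:  "main",
		Parameters:  []string{"--env", "dev"},
	}
	out, err := taskJSON(task)
	if err != nil {
		panic(err)
	}
	// prints {"entry_point":"main","package_name":"my_package","parameters":["--env","dev"]}
	fmt.Println(out)
}
```

Note that `named_parameters` does not appear in the output because the map is nil. Keys follow the struct's field order, not the order used in the literal.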