I am trying to fit a four-parameter logistic (4PL) curve to a set of data points in Python with scipy.optimize.curve_fit. However, the fit is quite bad; see below:

    import matplotlib.pyplot as plt
    import seaborn as sns
    import numpy as np
    from scipy.optimize import curve_fit

    def fit_4pl(x, a, b, c, d):
        return a + (d-a)/(1+np.exp(-b*(x-c)))

    x=np.array([2000. , 1000. , 500. , 250. , 125. , 62.5, 2000. , 1000. ,
                500. , 250. , 125. , 62.5])
    y=np.array([1.2935, 0.9735, 0.7274, 0.3613, 0.1906, 0.104 , 1.3964, 0.9751,
                0.6589, 0.353 , 0.1568, 0.0909])

    #initial guess
    p0 = [y.min(), 0.5, np.median(x), y.max()]

    #fit 4pl
    p_opt, cov_p = curve_fit(fit_4pl, x, y, p0=p0, method='dogbox')

    #get optimized model
    a_opt, b_opt, c_opt, d_opt = p_opt
    x_model = np.linspace(min(x), max(x), len(x))
    y_model = fit_4pl(x_model, a_opt, b_opt, c_opt, d_opt)

    #plot
    plt.figure()
    sns.scatterplot(x=x, y=y, label="measured data")
    plt.title(f"Calibration curve of {i}")
    sns.lineplot(x=x_model, y=y_model, label='fit')
    plt.legend(loc='best')

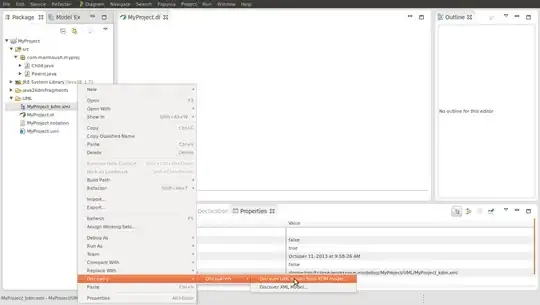

This gives me the following parameters and graph:

[2.46783333e-01 5.00000000e-01 3.75000000e+02 1.14953333e+00]

Graph of 4PL fit overlaid with measured data
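One thing that stands out in the returned parameters is that b and c are exactly the initial guesses (0.5 and 375, the median of x). A possible explanation, offered as a sketch rather than a confirmed diagnosis, is that with x in the hundreds and b = 0.5 the exponential saturates, so the model behaves like a step function and small changes in b or c do not change its output. The check below reuses fit_4pl and the initial guess from the code above; the relative perturbation of 1e-6 is an arbitrary value chosen for illustration.

```python
import numpy as np

def fit_4pl(x, a, b, c, d):
    # Same model as in the question
    return a + (d - a) / (1 + np.exp(-b * (x - c)))

x = np.array([2000., 1000., 500., 250., 125., 62.5,
              2000., 1000., 500., 250., 125., 62.5])
y = np.array([1.2935, 0.9735, 0.7274, 0.3613, 0.1906, 0.104,
              1.3964, 0.9751, 0.6589, 0.353, 0.1568, 0.0909])

# Initial guess from the question
a0, b0, c0, d0 = y.min(), 0.5, np.median(x), y.max()

# Model output at the initial guess
base = fit_4pl(x, a0, b0, c0, d0)

# Change in model output when b or c is nudged slightly
# (1e-6 relative step is an assumption made for this illustration)
db = fit_4pl(x, a0, b0 * (1 + 1e-6), c0, d0) - base
dc = fit_4pl(x, a0, b0, c0 * (1 + 1e-6), d0) - base

print("max change from nudging b:", np.abs(db).max())
print("max change from nudging c:", np.abs(dc).max())
```

If both printed values are zero, the optimizer has no gradient information for b and c at this starting point, which would be consistent with those two parameters not moving from the initial guess.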

This fit is clearly terrible, but I do not know how to improve this. Please help.

I've tried different initial guesses. These have resulted in either the fit shown above or no fit at all: either the warning "Covariance of the parameters could not be estimated" or the error "Optimal parameters not found: Number of calls to function has reached maxfev = 1000".

I looked at this similar question, and the graph produced in the accepted solution is what I'm aiming for. I have attempted to assign some bounds manually, but I am not sure how to choose them, and there was no discernible improvement.
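Since the x values form a two-fold dilution series spanning 62.5 to 2000, one option that might be worth trying is to fit the same logistic function against log(x) instead of x, so that b and c are on a scale where the curve is not saturated at the initial guess. The following is a minimal sketch under that assumption, not a verified solution: the bounds (a, b and d non-negative, c unbounded), the starting slope of 1.0, and max_nfev=5000 are illustrative choices that do not come from the original code. Note that c is then the log of the midpoint concentration rather than the concentration itself.

```python
import numpy as np
from scipy.optimize import curve_fit

def fit_4pl(x, a, b, c, d):
    # Same model as in the question; here x is passed in as log(concentration)
    return a + (d - a) / (1 + np.exp(-b * (x - c)))

x = np.array([2000., 1000., 500., 250., 125., 62.5,
              2000., 1000., 500., 250., 125., 62.5])
y = np.array([1.2935, 0.9735, 0.7274, 0.3613, 0.1906, 0.104,
              1.3964, 0.9751, 0.6589, 0.353, 0.1568, 0.0909])

# Work on a log scale so the dilution steps are evenly spaced
log_x = np.log(x)

# Initial guess: same idea as in the question, but on the log scale
# (the slope of 1.0 is an assumed starting value)
p0 = [y.min(), 1.0, np.median(log_x), y.max()]

# Illustrative bounds (assumptions): a, b, d >= 0, c unbounded
lower = [0.0, 0.0, -np.inf, 0.0]
upper = [np.inf, np.inf, np.inf, np.inf]

# Passing bounds makes curve_fit use the 'trf' method by default
p_opt, cov_p = curve_fit(fit_4pl, log_x, y, p0=p0,
                         bounds=(lower, upper), max_nfev=5000)

# Goodness of fit as a root-mean-square residual
residuals = y - fit_4pl(log_x, *p_opt)
rms = np.sqrt(np.mean(residuals ** 2))
print("parameters:", p_opt)
print("RMS residual:", rms)

# Smooth curve for plotting, evenly spaced on the log scale
x_model = np.geomspace(x.min(), x.max(), 200)
y_model = fit_4pl(np.log(x_model), *p_opt)
```

The x_model and y_model arrays can be passed to the same plotting calls as before; using 200 points instead of len(x) gives a smoother line. Because the data do not clearly reach an upper plateau, d and c may be poorly constrained, so the covariance estimate is worth inspecting before trusting the parameter values.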