I am trying to install a local Python wheel (.whl) on a Databricks cluster using DatabricksSubmitRunOperator in Airflow (v2.3.2) with the following configuration. It fails with a fileNotFound exception, even though I have checked the file path several times and the file exists.

    task1 = DatabricksSubmitRunOperator(
        task_id=<task_id>,
        job_name=<job_name>,
        existing_cluster_id=<cluster_id>,
        libraries=[
            {"whl": "file:/<local_absolute_path>"}
        ],
    )

The official documentation states that only DBFS and S3 storage are supported for .whl files. However, when I omit the file:/ prefix, Airflow shows the following error message, which lists local files as an option:

Library installation failed for library due to user error.

Error messages: Python wheels must be stored in dbfs, s3, adls, gs or as a local file. Make sure the URI begins with 'dbfs:', 'file:', 's3:', 'abfss:', 'gs:'
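For reference, the prefix check described by this error message can be reproduced locally before the run is submitted. This is only a minimal sketch: the list of prefixes is copied from the message above, and `wheel_library` is a hypothetical helper name, not part of Airflow or Databricks. It only checks the URI prefix and does not verify that the file is actually reachable from the cluster.

```python
# Prefixes taken verbatim from the error message; this is an assumption
# about what the check accepts, not an official list.
ALLOWED_PREFIXES = ("dbfs:", "file:", "s3:", "abfss:", "gs:")


def wheel_library(uri: str) -> dict:
    """Build a {"whl": uri} library entry, rejecting URIs without a known prefix.

    Hypothetical helper for illustration only.
    """
    if not uri.startswith(ALLOWED_PREFIXES):
        raise ValueError(
            f"Wheel URI {uri!r} must begin with one of {ALLOWED_PREFIXES}"
        )
    return {"whl": uri}
```

The returned dict has the same shape as the entry passed in `libraries` in the configuration above, for example `libraries=[wheel_library("file:/<local_absolute_path>")]`.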

Is it possible to install local .whl files on a Databricks cluster?

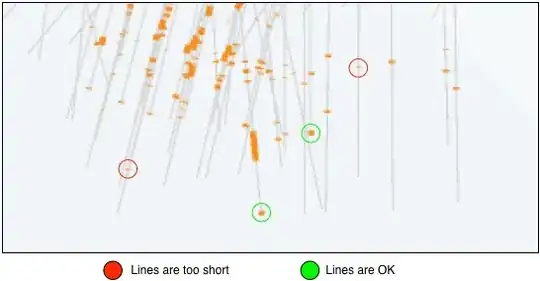

An alternative approach I tried was to copy the .whl to DBFS storage and install it from there. The problem with that is that the installation status stays stuck at "pending".
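For the DBFS attempt, the arguments I pass look roughly like the sketch below. The helper name `dbfs_run_kwargs` and the example path are hypothetical and used only for illustration; `existing_cluster_id` and `libraries` are the same operator arguments as in the configuration above.

```python
def dbfs_run_kwargs(cluster_id: str, dbfs_path: str) -> dict:
    """Build the cluster and library arguments for a wheel stored on DBFS.

    Hypothetical helper: dbfs_path is the location of the wheel inside DBFS,
    e.g. "/FileStore/wheels/pkg.whl" (an assumed example path).
    """
    # Normalize to a single leading slash after the "dbfs:" scheme.
    uri = "dbfs:/" + dbfs_path.lstrip("/")
    return {
        "existing_cluster_id": cluster_id,
        "libraries": [{"whl": uri}],
    }


# The result would be unpacked into the operator alongside the task
# definition, e.g. DatabricksSubmitRunOperator(task_id=..., **kwargs).
kwargs = dbfs_run_kwargs("<cluster_id>", "/FileStore/wheels/pkg.whl")
```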

Any help is appreciated.