Situation

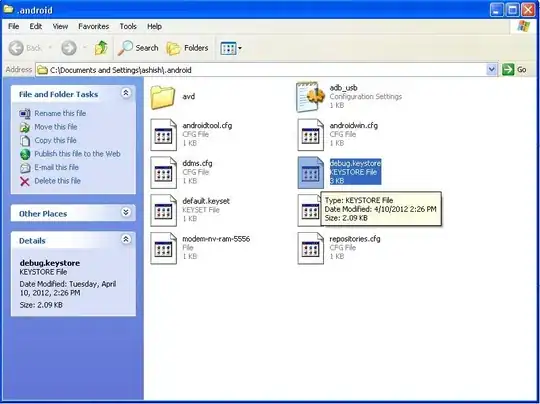

Following the Camera Calibration tutorial in OpenCV, I managed to get an undistorted image of a checkerboard using cv.calibrateCamera:

Original image (named image.tif on my computer):

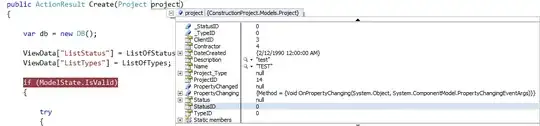

Code:

    import numpy as np
    import cv2 as cv
    import matplotlib.pyplot as plt

    # termination criteria
    criteria = (cv.TERM_CRITERIA_EPS + cv.TERM_CRITERIA_MAX_ITER, 30, 0.001)

    # prepare object points, like (0,0,0), (1,0,0), (2,0,0) ....,(11,12,0)
    objp = np.zeros((12*13,3), np.float32)
    objp[:,:2] = np.mgrid[0:12,0:13].T.reshape(-1,2)

    # Arrays to store object points and image points from all the images.
    objpoints = [] # 3d point in real world space
    imgpoints = [] # 2d points in image plane.

    img = cv.imread('image.tif')
    gray = cv.cvtColor(img, cv.COLOR_BGR2GRAY)

    # Find the chess board corners
    ret, corners = cv.findChessboardCorners(gray, (12,13), None)

    # If found, add object points, image points (after refining them)
    if ret:
        objpoints.append(objp)
        corners2 = cv.cornerSubPix(gray,corners, (11,11), (-1,-1), criteria)
        imgpoints.append(corners2)

        # Draw and display the corners
        cv.drawChessboardCorners(img, (12,13), corners2, ret)
        cv.imshow('img', img)
        cv.waitKey(2000)

    cv.destroyAllWindows()

    ret, mtx, dist, rvecs, tvecs = cv.calibrateCamera(objpoints, imgpoints, gray.shape[::-1], None, None)

    # Plot undistorted
    h, w = img.shape[:2]
    newcameramtx, roi = cv.getOptimalNewCameraMatrix(mtx, dist, (w,h), 1, (w,h))
    dst = cv.undistort(img, mtx, dist, None, newcameramtx)

    # crop the image
    x, y, w, h = roi
    dst = dst[y:y+h, x:x+w]

    plt.figure()
    plt.imshow(dst)
    plt.savefig("undistorted.png", dpi = 300)
    plt.close()

Undistorted image:

The undistorted image indeed has straight lines. However, in order to test the calibration procedure I would like to further transform the image into real-world coordinates using the rvecs and tvecs outputs of cv.calibrateCamera. From the documentation:

rvecs: Output vector of rotation vectors (Rodrigues) estimated for each pattern view (e.g. std::vector<cv::Mat>>). That is, each i-th rotation vector together with the corresponding i-th translation vector (see the next output parameter description) brings the calibration pattern from the object coordinate space (in which object points are specified) to the camera coordinate space. In more technical terms, the tuple of the i-th rotation and translation vector performs a change of basis from object coordinate space to camera coordinate space. Due to its duality, this tuple is equivalent to the position of the calibration pattern with respect to the camera coordinate space.

tvecs: Output vector of translation vectors estimated for each pattern view, see parameter description above.

Question: How can I do this? It would be great if answers included working code that outputs the transformed image.

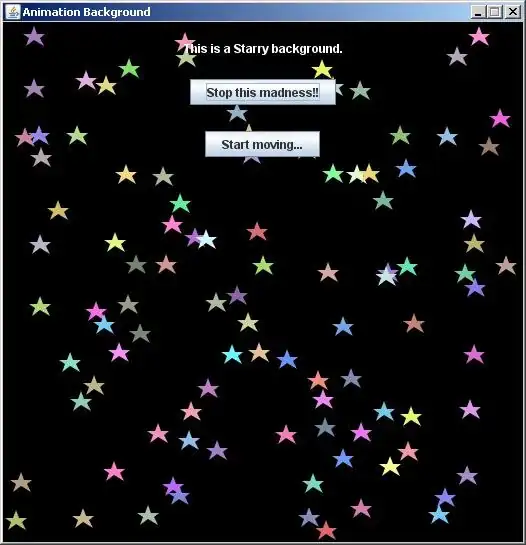

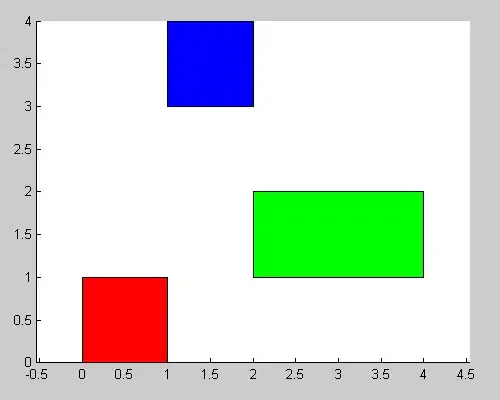

Expected output

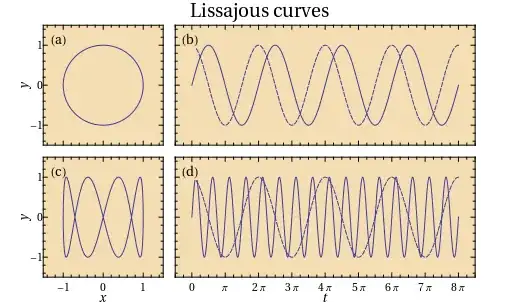

The image I expect should look something like this, where the red coordinates correspond to the real-world coordinates of the checkerboard (notice that the checkerboard is a rectangle in this projection):

What I have tried

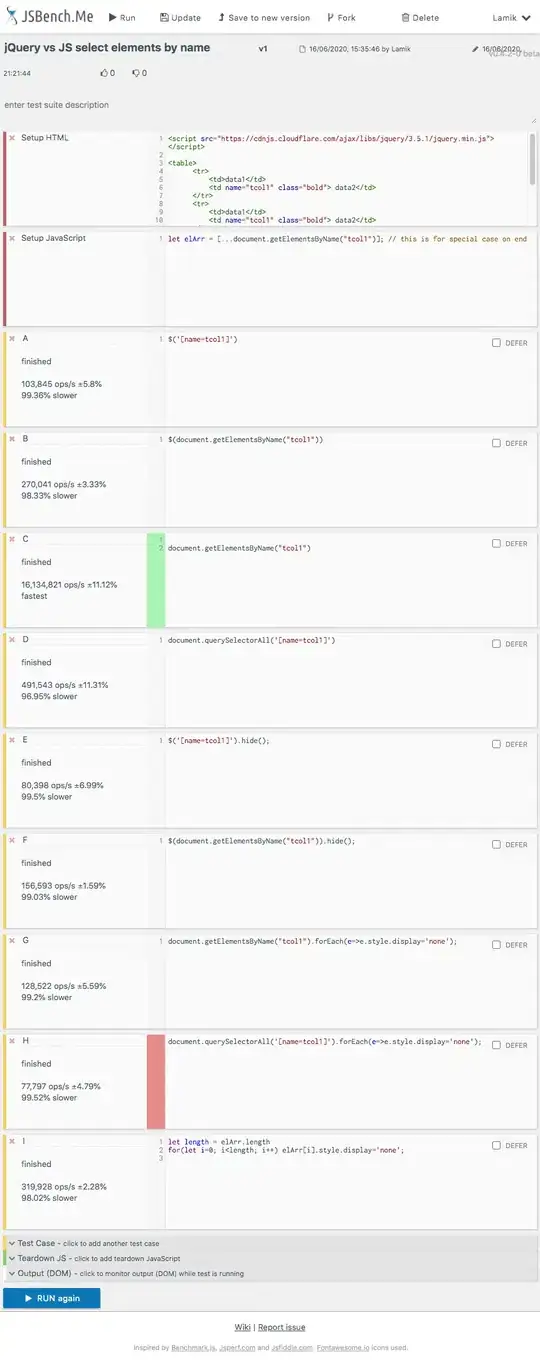

Following the comment of @Christoph Rackwitz, I found this post, which explains that the homography matrix H relating the 3D real-world coordinates (of the chessboard) to the 2D image coordinates is given by:

H = K [R1 R2 t]

where K is the camera calibration matrix, R1 and R2 are the first two columns of the rotational matrix and t is the translation vector.
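As I understand it, the formula follows from the pinhole projection once every board point is assumed to lie in the plane Z = 0 and lens distortion has already been removed. In the sketch below, s is an arbitrary projective scale and R3 is the third column of the rotational matrix. Neither symbol appears in the linked post's formula as quoted above.

```latex
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
= K \begin{bmatrix} R_1 & R_2 & R_3 & t \end{bmatrix}
  \begin{bmatrix} X \\ Y \\ 0 \\ 1 \end{bmatrix}
= K \begin{bmatrix} R_1 & R_2 & t \end{bmatrix}
  \begin{bmatrix} X \\ Y \\ 1 \end{bmatrix}
= H \begin{bmatrix} X \\ Y \\ 1 \end{bmatrix}
```

The column R3 drops out because it is always multiplied by Z = 0, which leaves a 3x3 matrix acting on the planar board coordinates (X, Y).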

I tried to calculate this from:

- K: we already have it as the mtx from cv.calibrateCamera.
- R1 and R2: from rvecs after converting it to a rotational matrix (because it is given in Rodrigues decomposition): cv.Rodrigues(rvecs[0])[0].
- t: should be tvecs.

To get the homography in the other direction, from the image coordinates to the real-world coordinates on the board plane, I then use the inverse of H.

Finally I use cv.warpPerspective to display the projected image.
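To check what the inverse of H actually returns, here is a minimal NumPy sketch with made-up intrinsics and pose (not my calibration output). It suggests that inv(H) maps pixels to coordinates in the units of objp, which are squares in my code, so the whole board would land in a region only about 12 x 13 pixels wide. The helper apply_h, the scale matrix S and the value of 40 pixels per square are assumptions of this sketch. I have not verified that scaling is the only thing missing in my case.

```python
import numpy as np

# Made-up intrinsics and pose, for illustration only.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
theta = np.deg2rad(30.0)                    # board tilted about the x axis
R = np.array([[1.0, 0.0, 0.0],
              [0.0, np.cos(theta), -np.sin(theta)],
              [0.0, np.sin(theta), np.cos(theta)]])
t = np.array([-5.0, -6.0, 25.0])            # in board units (squares)

# Plane-to-image homography for points with Z = 0: H = K [R1 R2 t]
H = K @ np.column_stack((R[:, 0], R[:, 1], t))

def apply_h(M, pts):
    """Apply a 3x3 homography M to an (N, 2) array of points."""
    ph = np.column_stack((pts, np.ones(len(pts)))) @ M.T
    return ph[:, :2] / ph[:, 2:3]

# Outer corners of the 12 x 13 grid, in board units.
board = np.array([[0.0, 0.0], [11.0, 0.0], [11.0, 12.0], [0.0, 12.0]])
pix = apply_h(H, board)                     # where they appear in the image

# inv(H) takes pixels back to board units, not to output-image pixels.
back = apply_h(np.linalg.inv(H), pix)

# Hypothetical scale: 40 output pixels per board square.
S = np.diag([40.0, 40.0, 1.0])
out = apply_h(S @ np.linalg.inv(H), pix)

print(np.round(back, 6))
print(np.round(out, 3))
```

Under these assumptions, the matrix passed to cv.warpPerspective would be S @ np.linalg.inv(H) rather than np.linalg.inv(H) alone, with an output size large enough to hold the scaled board.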

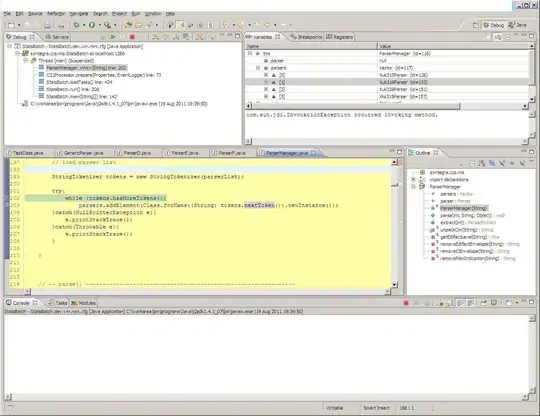

Code:

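    # rotation matrix from the Rodrigues vector of the first (and only) view
    R = cv.Rodrigues(rvecs[0])[0]
    tvec = tvecs[0].squeeze()
    # H = K [R1 R2 t], normalised by the z component of t
    H = np.dot(mtx, np.concatenate((R[:,:2], tvec[:,None]), axis = 1) )/tvec[-1]
    plt.imshow(cv.warpPerspective(dst, np.linalg.inv(H), (dst.shape[1], dst.shape[0])))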

But this does not work; I get the following picture:

Any ideas where the problem is?

Related questions: