AFAIK, once a container is deployed to Cloud Run, it automatically listens for incoming requests. See the documentation for reference.

Instead, you can send a request to the deployed container from your DAG, using the code below.

This DAG has three tasks: print_token, task_get_op and process_data.

- print_token prints the identity token needed to authenticate requests to your deployed Cloud Run container. I used "xcom_pull" to get the output of the "BashOperator" and assign it to token, so it can be used to authenticate the HTTP request that follows.
- task_get_op performs a GET on the connection cloud_run (this just contains the Cloud Run endpoint) and sets the header 'Authorization': 'Bearer ' + token for authentication.
- process_data performs "xcom_pull" on "task_get_op" to get the response and prints it using PythonOperator.

    import datetime

    import airflow
    from airflow.operators import bash
    from airflow.operators import python
    from airflow.providers.http.operators.http import SimpleHttpOperator

    YESTERDAY = datetime.datetime.now() - datetime.timedelta(days=1)

    default_args = {
        'owner': 'Composer Example',
        'depends_on_past': False,
        'email': [''],
        'email_on_failure': False,
        'email_on_retry': False,
        'retries': 1,
        'retry_delay': datetime.timedelta(minutes=5),
        'start_date': YESTERDAY,
    }

    with airflow.DAG(
            'composer_http_request',
            catchup=False,  # keyword argument, not a string
            default_args=default_args,
            schedule_interval=datetime.timedelta(days=1)) as dag:

        print_token = bash.BashOperator(
            task_id='print_token',
            # The audience is the endpoint of the deployed Cloud Run container
            bash_command='gcloud auth print-identity-token "--audiences=https://hello-world-fri824-ab.c.run.app"'
        )

        # Jinja template, rendered at run time: gets the output of the 'print_token' task
        token = "{{ task_instance.xcom_pull(task_ids='print_token') }}"

        task_get_op = SimpleHttpOperator(
            task_id='get_op',
            method='GET',
            http_conn_id='cloud_run',
            headers={'Authorization': 'Bearer ' + token},
        )

        def process_data_from_http(**kwargs):
            # Pull the HTTP response pushed to XCom by 'get_op' and print it
            ti = kwargs['ti']
            http_data = ti.xcom_pull(task_ids='get_op')
            print(http_data)

        # Airflow 2 passes the context automatically, so provide_context is not needed
        process_data = python.PythonOperator(
            task_id='process_data_from_http',
            python_callable=process_data_from_http,
        )

        print_token >> task_get_op >> process_data

cloud_run connection:

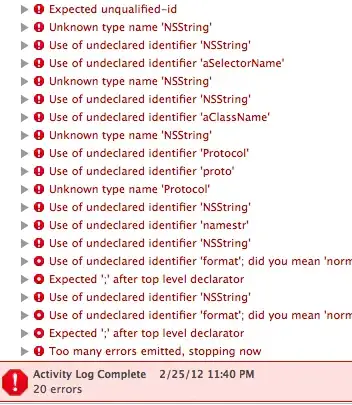

Output (graph):

print_token logs:

task_get_op logs:

process_data logs (output from GET):

NOTE: I'm using Cloud Composer 1.17.7 and Airflow 2.0.2, and I installed apache-airflow-providers-http to be able to use SimpleHttpOperator.
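
If you want to check the token and header handling outside Airflow, here is a minimal sketch using only the Python standard library. It is not part of the DAG: the helper names `last_stdout_line` and `build_cloud_run_request` are hypothetical, and the token string is a placeholder standing in for real `gcloud` output. It mirrors two things the DAG relies on: BashOperator pushes the last line of stdout to XCom, and the GET request carries an `Authorization: Bearer <token>` header.

```python
import urllib.request


def last_stdout_line(stdout: str) -> str:
    """Return the last line of stdout, which is what BashOperator pushes to XCom."""
    lines = stdout.strip().splitlines()
    return lines[-1].strip() if lines else ""


def build_cloud_run_request(url: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a GET request with a Bearer token header."""
    return urllib.request.Request(
        url,
        method="GET",
        headers={"Authorization": "Bearer " + token},
    )


if __name__ == "__main__":
    # Placeholder for the output of:
    #   gcloud auth print-identity-token --audiences=<Cloud Run endpoint>
    fake_gcloud_output = "some-identity-token\n"
    token = last_stdout_line(fake_gcloud_output)

    request = build_cloud_run_request(
        "https://hello-world-fri824-ab.c.run.app", token
    )
    print(request.get_method(), request.full_url)
    print(request.get_header("Authorization"))
    # To actually send it: urllib.request.urlopen(request).read()
```

With a real identity token in place of the placeholder, sending the request should return the same response that task_get_op pushes to XCom, assuming the caller is allowed to invoke the service.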