I'm trying to replicate the exercise about halfway down this page: https://d2l.ai/chapter_recurrent-neural-networks/sequence.html

The exercise uses a sine function to create 1000 data points in the range -1 to 1, and uses a recurrent network to approximate the function.

Below is the code I used. I'm going back to study why this isn't working, because it doesn't make much sense to me right now: I was easily able to approximate this function with a feed-forward network.

//get data

ArrayList<DataSet> list = new ArrayList<>();

DataSet dss = DataSetFetch.getDataSet(Constants.DataTypes.math, "sine", 20, 500, 0, 0);

DataSet dsMain = dss.copy();

if (!dss.isEmpty()){

list.add(dss);

}

if (list.isEmpty()){

return;

}

//format dataset

list = DataSetFormatter.formatReccurnent(list, 0);

//get network

int history = 10;

ArrayList<LayerDescription> ldlist = new ArrayList<>();

LayerDescription l = new LayerDescription(1,history, Activation.RELU);

ldlist.add(l);

LayerDescription ll = new LayerDescription(history, 1, Activation.IDENTITY, LossFunctions.LossFunction.MSE);

ldlist.add(ll);

ListenerDescription ld = new ListenerDescription(20, true, false);

MultiLayerNetwork network = Reccurent.getLstm(ldlist, 123, WeightInit.XAVIER, new RmsProp(), ld);

//train network

final List<DataSet> lister = list.get(0).asList();

DataSetIterator iter = new ListDataSetIterator<>(lister, 50);

network.fit(iter, 50);

network.rnnClearPreviousState();

//test network

ArrayList<DataSet> resList = new ArrayList<>();

DataSet result = new DataSet();

INDArray arr = Nd4j.zeros(lister.size()+1);

INDArray holder;

if (list.size() > 1){

//test on training data

System.err.println("oops");

}else{

//test on original or scaled data

for (int i = 0; i < lister.size(); i++) {

holder = network.rnnTimeStep(lister.get(i).getFeatures());

arr.putScalar(i,holder.getFloat(0));

}

}

//add original data

resList.add(dsMain);

//result

result.setFeatures(dsMain.getFeatures());

result.setLabels(arr);

resList.add(result);

//display

DisplayData.plot2DScatterGraph(resList);

Can you explain the code I would need for an LSTM network with 1 input, 10 hidden units, and 1 output to approximate a sine function?

I'm not using any normalization, as the function is already in the range -1 to 1. I'm using each Y value as the feature and the following Y value as the label to train the network.
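To make the feature/label setup concrete, here is a minimal plain-Java sketch of what I mean by pairing each Y value with the one that follows it. The names `SinePairs`, `sineSeries`, and `nextStepPairs` are hypothetical and exist only for this illustration; they are not part of my helper classes or of DL4J. The x range (0 to 4π) is an assumption, not what my `DataSetFetch` call actually produces.

```java
import java.util.Arrays;

// Hypothetical illustration class, not part of my project or of DL4J.
final class SinePairs {

    /** Samples sin(x) at n evenly spaced points in [start, end]. */
    static double[] sineSeries(int n, double start, double end) {
        if (n < 2) {
            throw new IllegalArgumentException("need at least 2 points");
        }
        double[] y = new double[n];
        double step = (end - start) / (n - 1);
        for (int i = 0; i < n; i++) {
            y[i] = Math.sin(start + i * step);
        }
        return y;
    }

    /**
     * Builds next-step pairs: features[t] = y[t], labels[t] = y[t + 1].
     * Returns a two-row array {features, labels}, each of length y.length - 1.
     */
    static double[][] nextStepPairs(double[] y) {
        double[] features = Arrays.copyOfRange(y, 0, y.length - 1);
        double[] labels = Arrays.copyOfRange(y, 1, y.length);
        return new double[][] {features, labels};
    }

    public static void main(String[] args) {
        // Assumed range: two full periods of the sine wave.
        double[] y = sineSeries(1000, 0.0, 4 * Math.PI);
        double[][] pairs = nextStepPairs(y);
        System.out.println("features: " + pairs[0].length
                + ", labels: " + pairs[1].length);
        System.out.println("first pair: " + pairs[0][0] + " -> " + pairs[1][0]);
    }
}
```

With 1000 samples this gives 999 pairs, because the last sample has no following value to serve as its label.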

As you may notice, I am building a class that allows for easier construction of nets. I have tried throwing many changes at the problem, but I am sick of guessing.

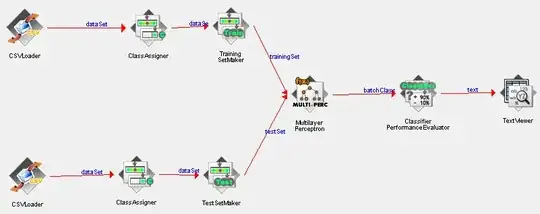

Here are some examples of my results. Blue is the data; red is the result.