I'm trying to replicate the DQN scores for Breakout using RLlib. After 5M steps the average reward is 2.0, while the known DQN score for Breakout is 100+. I'm wondering whether this is because of reward clipping, so that the reported reward does not correspond to the actual Atari score. In OpenAI Baselines, the actual score is placed in info['r'], while the reward value is the clipped one. Is this also the case for RLlib? Is there any way to see the actual average score while training?

1 Answer

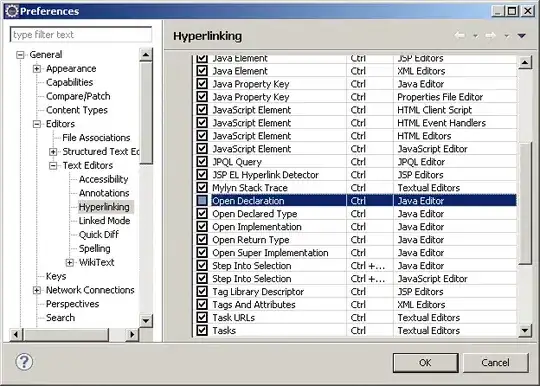

According to the list of trainer parameters, the library will clip Atari rewards by default:

    # Whether to clip rewards prior to experience postprocessing. Setting to
    # None means clip for Atari only.
    "clip_rewards": None,

However, the episode_reward_mean reported in TensorBoard should still correspond to the actual, unclipped scores.
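To make the distinction concrete, here is a minimal sketch of how the clipped training signal and the raw game score can differ for the same episode. It assumes sign-based clipping (as in the original DQN setup). The reward values and function names are hypothetical and chosen only for illustration, and this is not RLlib's internal code.

```python
import numpy as np


def clip_sign(rewards):
    """Sign-clip rewards to {-1, 0, +1} (assumed clipping scheme)."""
    return np.sign(np.asarray(rewards, dtype=np.float64))


def episode_returns(raw_rewards):
    """Return (raw score, clipped return) for a single episode."""
    raw = np.asarray(raw_rewards, dtype=np.float64)
    return float(raw.sum()), float(clip_sign(raw).sum())


# Hypothetical per-step rewards for one episode.
example_rewards = [0, 1, 0, 4, 0, 0, 7, 1]
raw_score, clipped_return = episode_returns(example_rewards)
print(f"raw score: {raw_score}, clipped return: {clipped_return}")
```

If the metric you are reading were built from clipped rewards, it would undercount any step worth more than one point, so comparing the two sums on a few logged episodes is a quick sanity check.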

While an average score of 2 is very low relative to the Breakout benchmarks, 5M steps may not be enough for DQN unless you are using something like Rainbow to speed things up significantly. Even then, DQN is notoriously slow to converge, so you may want to check your results with a longer run and/or consider tuning your DQN configuration.

I've thrown together a quick test, and it looks like reward clipping doesn't have much of an effect on Breakout, at least early in training (unclipped in blue, clipped in orange):

I don't know enough about Breakout to comment on its scoring system, but if higher rewards become available later as performance improves (as opposed to getting the same small reward more frequently, say), we should start to see the two diverge. In such cases, we can still normalize the rewards or convert them to a logarithmic scale.
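As a rough illustration of those two alternatives to hard clipping, the sketch below applies a signed logarithmic transform and a simple standard-deviation scaling to a batch of rewards. The function names and the sample values are hypothetical; in practice such a transform would have to be wired into the environment or the trainer's reward processing, which is not shown here.

```python
import numpy as np


def log_scale(rewards):
    """Compress magnitudes but keep the sign: sign(r) * log(1 + |r|)."""
    r = np.asarray(rewards, dtype=np.float64)
    return np.sign(r) * np.log1p(np.abs(r))


def normalize(rewards, eps=1e-8):
    """Divide by the batch standard deviation (no mean shift, so zeros stay zero)."""
    r = np.asarray(rewards, dtype=np.float64)
    return r / (r.std() + eps)


rewards = [0.0, 1.0, 4.0, 7.0]  # hypothetical reward values
scaled = log_scale(rewards)
normalized = normalize(rewards)
print(scaled, normalized)
```

Unlike sign clipping, both transforms preserve the ordering of rewards, so a larger reward still produces a larger learning signal.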

Here are the configurations I used:

    lr: 0.00025
    learning_starts: 50000
    timesteps_per_iteration: 4
    buffer_size: 1000000
    train_batch_size: 32
    target_network_update_freq: 10000
    # (some) rainbow components
    n_step: 10
    noisy: True
    # work-around to remove epsilon-greedy
    schedule_max_timesteps: 1
    exploration_final_eps: 0
    prioritized_replay: True
    prioritized_replay_alpha: 0.6
    prioritized_replay_beta: 0.4
    num_atoms: 51
    double_q: False
    dueling: False
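For readers unfamiliar with the n_step setting, the sketch below shows the generic n-step target it refers to: the discounted sum of the next n rewards plus a bootstrapped value estimate. The discount factor, the function name, and the inputs are assumptions for illustration. This is not RLlib's implementation.

```python
def n_step_target(rewards, bootstrap_value, gamma=0.99):
    """Discounted sum of `rewards` plus gamma**n times a bootstrapped value.

    `rewards` holds the next n rewards; `bootstrap_value` stands in for the
    value estimate of the state reached after those n steps.
    """
    target = 0.0
    for k, r in enumerate(rewards):
        target += (gamma ** k) * r
    return target + (gamma ** len(rewards)) * bootstrap_value


# Hypothetical 3-step example with a deliberately small discount factor.
target = n_step_target([1.0, 0.0, 1.0], bootstrap_value=2.0, gamma=0.5)
print(target)
```

A larger n propagates reward information back through more steps per update, at the cost of relying more on off-policy data.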

You may also be interested in the rl-experiments repository from the RLlib authors, where they posted results from their own library against the standard benchmarks, along with the configurations used. With those you should be able to get even better performance.
