I am trying to implement my own loss function for binary classification. To get started, I want to reproduce the exact behavior of the built-in binary objective. In particular, I want the following:

- The losses of both functions are on the same scale

- The training and validation slopes are similar

- predict_proba(X) returns probabilities

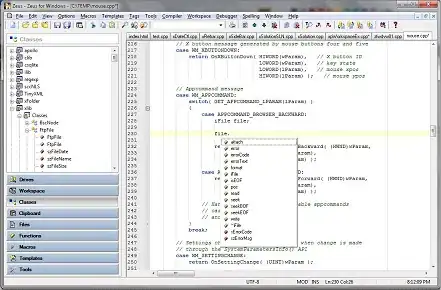

None of this is the case for the code below:

    import sklearn.datasets
    import lightgbm as lgb
    import numpy as np

    X, y = sklearn.datasets.load_iris(return_X_y=True)
    X, y = X[y <= 1], y[y <= 1]

    def loglikelihood(labels, preds):
        preds = 1. / (1. + np.exp(-preds))
        grad = preds - labels
        hess = preds * (1. - preds)
        return grad, hess

    model = lgb.LGBMClassifier(objective=loglikelihood)  # or "binary"
    model.fit(X, y, eval_set=[(X, y)], eval_metric="binary_logloss")
    lgb.plot_metric(model.evals_result_)
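
As a sanity check on the gradient and Hessian returned by loglikelihood above, here is a minimal NumPy-only sketch that compares them against finite differences of the log loss taken with respect to the raw score. The helpers `sigmoid` and `logloss`, the sample values, and the step size `eps` are my own choices for illustration and are not part of LightGBM:

```python
import numpy as np

def sigmoid(raw):
    # Map raw (margin) scores to probabilities.
    return 1.0 / (1.0 + np.exp(-raw))

def logloss(labels, raw):
    # Per-sample binary log loss, written as a function of the raw score.
    p = sigmoid(raw)
    return -(labels * np.log(p) + (1.0 - labels) * np.log(1.0 - p))

def loglikelihood(labels, preds):
    # Same gradient and Hessian as in the question's custom objective.
    preds = sigmoid(preds)
    grad = preds - labels
    hess = preds * (1.0 - preds)
    return grad, hess

# Arbitrary illustrative labels and raw scores (not taken from the iris data).
labels = np.array([0.0, 1.0, 0.0, 1.0])
raw = np.array([-1.5, 0.3, 2.0, -0.7])
eps = 1e-4

# Central finite differences for the first and second derivatives.
num_grad = (logloss(labels, raw + eps) - logloss(labels, raw - eps)) / (2 * eps)
num_hess = (logloss(labels, raw + eps) - 2 * logloss(labels, raw)
            + logloss(labels, raw - eps)) / eps ** 2

grad, hess = loglikelihood(labels, raw)
grad_ok = np.allclose(grad, num_grad, atol=1e-6)
hess_ok = np.allclose(hess, num_hess, atol=1e-4)
print(grad_ok, hess_ok)
```

If both checks pass, the derivatives themselves match the log loss, which suggests the differences come from somewhere other than the formulas for grad and hess.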

With objective="binary":

With objective=loglikelihood the curve is not even smooth:

Moreover, a sigmoid has to be applied to the output of model.predict_proba(X) to get probabilities when using loglikelihood (as I figured out from https://github.com/Microsoft/LightGBM/issues/2136).
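
To make the sigmoid step concrete, this is roughly the post-processing I mean. It is only a sketch: `raw_to_proba` is a hypothetical helper of mine, and I am assuming the model's raw scores are available as a 1-D array (exactly which call returns them, and in which shape, may depend on the LightGBM version). The values in `raw` are stand-ins, not real model output:

```python
import numpy as np

def raw_to_proba(raw_scores):
    # Hypothetical helper: turn 1-D raw (margin) scores into an
    # (n_samples, 2) array laid out like scikit-learn's predict_proba,
    # with column 0 = P(class 0) and column 1 = P(class 1).
    raw_scores = np.asarray(raw_scores, dtype=float)
    p1 = 1.0 / (1.0 + np.exp(-raw_scores))
    return np.column_stack((1.0 - p1, p1))

# Stand-in raw scores; in practice these would come from the fitted model.
raw = np.array([-2.0, 0.0, 3.0])
proba = raw_to_proba(raw)
print(proba)
```

Each row sums to 1, and a raw score of 0 maps to a probability of 0.5 for both classes.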

Is it possible to get the same behavior with a custom loss function? Does anybody understand where all these differences come from?