I am doing segmentation and my dataset is fairly small (1840 images), so I would like to use data augmentation. I am using the generator from the Keras documentation, which yields a tuple containing a batch of images and the corresponding masks, both augmented the same way.

    from keras.preprocessing.image import ImageDataGenerator

    data_gen_args = dict(featurewise_center=True,
                         featurewise_std_normalization=True,
                         rotation_range=30,
                         width_shift_range=0.2,
                         height_shift_range=0.2,
                         zoom_range=0.2,
                         fill_mode='nearest',
                         horizontal_flip=True)
    image_datagen = ImageDataGenerator(**data_gen_args)
    mask_datagen = ImageDataGenerator(**data_gen_args)

    # Provide the same seed and keyword arguments to the fit and flow methods
    seed = 1
    image_datagen.fit(X_train, augment=True, seed=seed, rounds=2)
    mask_datagen.fit(Y_train, augment=True, seed=seed, rounds=2)

    image_generator = image_datagen.flow(X_train,
                                         batch_size=BATCH_SIZE,
                                         seed=seed)
    mask_generator = mask_datagen.flow(Y_train,
                                       batch_size=BATCH_SIZE,
                                       seed=seed)

    # Combine generators into one which yields images and masks
    train_generator = zip(image_generator, mask_generator)
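
To spell out how I understand the shared seed to work, here is a toy sketch in plain Python. It is not how Keras implements `flow`: `toy_flow`, the placeholder sample names, and the single flip decision are made-up stand-ins. The only point is that two generators seeded identically make the same random choices, so `zip` pairs matching batches.

```python
import random

def toy_flow(samples, batch_size, seed):
    """Hypothetical stand-in for ImageDataGenerator.flow: shuffles the
    samples and draws one random flip decision per sample."""
    rng = random.Random(seed)  # all randomness comes from the seed
    order = list(range(len(samples)))
    while True:
        rng.shuffle(order)
        for start in range(0, len(order), batch_size):
            batch_idx = order[start:start + batch_size]
            # Pair each sample with the random "augmentation" drawn for it
            yield [(samples[i], rng.random() < 0.5) for i in batch_idx]

images = ["img0", "img1", "img2", "img3", "img4"]  # placeholder data
masks = ["msk0", "msk1", "msk2", "msk3", "msk4"]

seed = 1
toy_train_generator = zip(toy_flow(images, 2, seed), toy_flow(masks, 2, seed))

image_batch, mask_batch = next(toy_train_generator)
for (img, img_flip), (msk, msk_flip) in zip(image_batch, mask_batch):
    # Same sample index and same flip decision on both sides
    print(img, img_flip, msk, msk_flip)
```

In this toy version the pairing only holds because both generators consume random numbers in exactly the same order; I am assuming the real generators behave similarly when given the same seed and arguments.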

I then train my model with this generator:

    import math as m  # assuming m refers to the standard math module

    model.fit_generator(
        generator=train_generator,
        steps_per_epoch=m.ceil(len(X_train) / BATCH_SIZE),
        validation_data=(X_val, Y_val),
        epochs=EPOCHS,
        callbacks=callbacks,
        workers=4,
        use_multiprocessing=True,
        verbose=2)

But with this setup I get a negative loss and the model does not train:

    Epoch 2/5000
    - 4s - loss: -2.5572e+00 - iou: 0.0138 - acc: 0.0000e+00 - val_loss: 11.8256 - val_iou: 0.0000e+00 - val_acc: 0.1551

I should add that the model does train if I don't use featurewise_center and featurewise_std_normalization. However, my model uses batch normalization and performs much better when the input is normalized, which is why I would really like to keep the featurewise parameters.
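
One thing that might be worth checking is what the featurewise options do to the masks, since `mask_datagen` receives the same `data_gen_args` as `image_datagen`. The sketch below is plain Python, not Keras: `binary_crossentropy` and `standardize` are hand-written helpers following the textbook formulas, and the mask and prediction values are invented. It only shows that once targets are centered and scaled they leave the [0, 1] range, and cross-entropy against such targets can become negative.

```python
import math

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy over two flat lists of equal length."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip predictions away from 0 and 1
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(y_true)

def standardize(values):
    """Subtract the mean and divide by the standard deviation."""
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    return [(v - mean) / std for v in values]

mask = [0.0, 0.0, 0.0, 1.0]  # invented binary mask, mostly background
pred = [0.1, 0.1, 0.1, 0.9]  # invented sigmoid outputs

loss_raw = binary_crossentropy(mask, pred)               # targets in {0, 1}
loss_std = binary_crossentropy(standardize(mask), pred)  # targets outside [0, 1]

print("standardized mask:", standardize(mask))
print("loss with raw mask:", loss_raw)
print("loss with standardized mask:", loss_std)
```

If something like this happens to `Y_train` in my pipeline, it would be consistent with the negative loss above, but I have not confirmed it on the real data.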

I hope I have explained my problem clearly and that someone can help, because I really do not understand what is going on.

EDIT: My model is a U-Net with custom Conv2D and Conv2DTranspose blocks:

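    from keras.layers import (Input, Conv2D, Conv2DTranspose, BatchNormalization,
                              Activation, MaxPooling2D, concatenate)
    from keras.models import Model
    from keras.optimizers import Adam

    def Conv2D_BN(x, filters, kernel_size, strides=(1, 1), padding='same', activation='relu', kernel_initializer='glorot_normal', kernel_regularizer=None):
        # Convolution -> batch normalization -> activation
        x = Conv2D(filters, kernel_size=kernel_size, strides=strides, padding=padding, kernel_initializer=kernel_initializer, kernel_regularizer=kernel_regularizer)(x)
        x = BatchNormalization()(x)
        x = Activation(activation)(x)
        return x

    def Conv2DTranspose_BN(x, filters, kernel_size, strides=(1, 1), padding='same', activation='relu', kernel_initializer='glorot_normal', kernel_regularizer=None):
        # Transposed convolution -> batch normalization -> activation
        x = Conv2DTranspose(filters, kernel_size=kernel_size, strides=strides, padding=padding, kernel_initializer=kernel_initializer, kernel_regularizer=kernel_regularizer)(x)
        x = BatchNormalization()(x)
        x = Activation(activation)(x)
        return x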

    def build_unet_bn(input_layer=Input((128, 128, 3)), start_depth=16, activation='relu', initializer='glorot_normal'):
        # 128 -> 64
        conv1 = Conv2D_BN(input_layer, start_depth * 1, (3, 3), activation=activation, kernel_initializer=initializer)
        conv1 = Conv2D_BN(conv1, start_depth * 1, (3, 3), activation=activation, kernel_initializer=initializer)
        pool1 = MaxPooling2D((2, 2))(conv1)

        # 64 -> 32
        conv2 = Conv2D_BN(pool1, start_depth * 2, (3, 3), activation=activation, kernel_initializer=initializer)
        conv2 = Conv2D_BN(conv2, start_depth * 2, (3, 3), activation=activation, kernel_initializer=initializer)
        pool2 = MaxPooling2D((2, 2))(conv2)

        # 32 -> 16
        conv3 = Conv2D_BN(pool2, start_depth * 4, (3, 3), activation=activation, kernel_initializer=initializer)
        conv3 = Conv2D_BN(conv3, start_depth * 4, (3, 3), activation=activation, kernel_initializer=initializer)
        pool3 = MaxPooling2D((2, 2))(conv3)

        # 16 -> 8
        conv4 = Conv2D_BN(pool3, start_depth * 8, (3, 3), activation=activation, kernel_initializer=initializer)
        conv4 = Conv2D_BN(conv4, start_depth * 8, (3, 3), activation=activation, kernel_initializer=initializer)
        pool4 = MaxPooling2D((2, 2))(conv4)

        # Middle
        convm = Conv2D_BN(pool4, start_depth * 16, (3, 3), activation=activation, kernel_initializer=initializer)
        convm = Conv2D_BN(convm, start_depth * 16, (3, 3), activation=activation, kernel_initializer=initializer)

        # 8 -> 16
        deconv4 = Conv2DTranspose_BN(convm, start_depth * 8, (3, 3), strides=(2, 2), activation=activation, kernel_initializer=initializer)
        uconv4 = concatenate([deconv4, conv4])
        uconv4 = Conv2D_BN(uconv4, start_depth * 8, (3, 3), activation=activation, kernel_initializer=initializer)
        uconv4 = Conv2D_BN(uconv4, start_depth * 8, (3, 3), activation=activation, kernel_initializer=initializer)

        # 16 -> 32
        deconv3 = Conv2DTranspose_BN(uconv4, start_depth * 4, (3, 3), strides=(2, 2), activation=activation, kernel_initializer=initializer)
        uconv3 = concatenate([deconv3, conv3])
        uconv3 = Conv2D_BN(uconv3, start_depth * 4, (3, 3), activation=activation, kernel_initializer=initializer)
        uconv3 = Conv2D_BN(uconv3, start_depth * 4, (3, 3), activation=activation, kernel_initializer=initializer)

        # 32 -> 64
        deconv2 = Conv2DTranspose_BN(uconv3, start_depth * 2, (3, 3), strides=(2, 2), activation=activation, kernel_initializer=initializer)
        uconv2 = concatenate([deconv2, conv2])
        uconv2 = Conv2D_BN(uconv2, start_depth * 2, (3, 3), activation=activation, kernel_initializer=initializer)
        uconv2 = Conv2D_BN(uconv2, start_depth * 2, (3, 3), activation=activation, kernel_initializer=initializer)

        # 64 -> 128
        deconv1 = Conv2DTranspose_BN(uconv2, start_depth * 1, (3, 3), strides=(2, 2), activation=activation, kernel_initializer=initializer)
        uconv1 = concatenate([deconv1, conv1])
        uconv1 = Conv2D_BN(uconv1, start_depth * 1, (3, 3), activation=activation, kernel_initializer=initializer)
        uconv1 = Conv2D_BN(uconv1, start_depth * 1, (3, 3), activation=activation, kernel_initializer=initializer)

        output_layer = Conv2D(1, (1, 1), padding="same", activation="sigmoid")(uconv1)
        return output_layer

I create and compile my model with:

    input_layer = Input((size, size, 3))
    output_layer = build_unet_bn(input_layer, 16)
    model = Model(inputs=input_layer, outputs=output_layer)

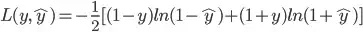

    model.compile(optimizer=Adam(lr=1e-3), loss='binary_crossentropy', metrics=metrics)