I created a simple DCGAN with 6 layers and trained it on a portion of the CelebA dataset (30K images).

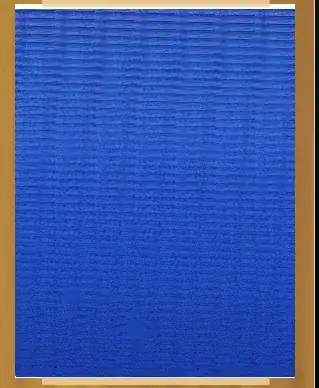

I noticed that the images my network generates look dim, and as training continues the bright colors fade into dull ones!

Here are some examples:

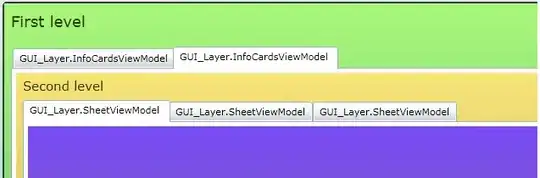

This is what the CelebA images (the real images used for training) look like:

And these are the generated ones. The number shows the epoch (training ran for 30 epochs in total):

What is the cause of this phenomenon?

I tried all the usual GAN tricks, such as rescaling the input images to [-1, 1], not using BatchNorm in the first layer of the Discriminator or the last layer of the Generator, and using LeakyReLU(0.2) in the Discriminator and ReLU in the Generator. Yet I have no idea why the images are this dim/dark!
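Since the rescaling to [-1, 1] is mentioned above, one thing that may be worth ruling out is the display step. The plotting code is not shown in this question, so this is only an assumption: if tanh outputs in [-1, 1] are shown without being mapped back to [0, 1], everything below zero clips to black and the images look darker than they are. A minimal sketch of the forward and inverse mapping (the helper names `to_tanh_range`, `to_display_range`, and `clip01` are hypothetical and not part of my code):

```python
def to_tanh_range(x):
    """Map pixel values from [0, 1] to [-1, 1] (the range of a tanh output)."""
    return x * 2.0 - 1.0


def to_display_range(y):
    """Invert to_tanh_range: map [-1, 1] back to [0, 1] for plotting."""
    return (y + 1.0) / 2.0


def clip01(v):
    """Clamp a scalar to [0, 1], roughly what an image viewer does."""
    return min(1.0, max(0.0, v))


# A mid-gray pixel (0.5) becomes 0.0 in tanh space.
gray = to_tanh_range(0.5)

# Shown without undoing the scaling, the mid-gray pixel appears black;
# shown after the inverse mapping, it is mid-gray again.
shown_raw = clip01(gray)
shown_fixed = clip01(to_display_range(gray))
```

Both mapping functions use only arithmetic operators, so they should also work unchanged on NumPy arrays or PyTorch tensors.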

Is this simply caused by having too few training images?

Or is it caused by deficiencies in the networks? If so, what is the source of those deficiencies?

Here is how the networks are implemented:

    import numpy as np
    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    def conv_batch(in_dim, out_dim, kernel_size, stride, padding, batch_norm=True):
        # Conv2d (no bias), optionally followed by BatchNorm2d
        layers = nn.ModuleList()
        conv = nn.Conv2d(in_dim, out_dim, kernel_size, stride, padding, bias=False)
        layers.append(conv)
        if batch_norm:
            layers.append(nn.BatchNorm2d(out_dim))
        return nn.Sequential(*layers)


    class Discriminator(nn.Module):
        def __init__(self, conv_dim=32, act = nn.ReLU()):
            super().__init__()
            self.conv_dim = conv_dim
            self.act = act
            # no BatchNorm in the first layer
            self.conv1 = conv_batch(3, conv_dim, 4, 2, 1, False)
            self.conv2 = conv_batch(conv_dim, conv_dim*2, 4, 2, 1)
            self.conv3 = conv_batch(conv_dim*2, conv_dim*4, 4, 2, 1)
            self.conv4 = conv_batch(conv_dim*4, conv_dim*8, 4, 1, 1)
            self.conv5 = conv_batch(conv_dim*8, conv_dim*10, 4, 2, 1)
            self.conv6 = conv_batch(conv_dim*10, conv_dim*10, 3, 1, 1)
            self.drp = nn.Dropout(0.5)
            self.fc = nn.Linear(conv_dim*10*3*3, 1)

        def forward(self, input):
            batch = input.size(0)
            output = self.act(self.conv1(input))
            output = self.act(self.conv2(output))
            output = self.act(self.conv3(output))
            output = self.act(self.conv4(output))
            output = self.act(self.conv5(output))
            output = self.act(self.conv6(output))
            # flatten the feature maps for the linear layer
            output = output.view(batch, self.fc.in_features)
            output = self.fc(output)
            output = self.drp(output)
            return output


    def deconv_convtranspose(in_dim, out_dim, kernel_size, stride, padding, batchnorm=True):
        # ConvTranspose2d, optionally followed by BatchNorm2d
        layers = []
        deconv = nn.ConvTranspose2d(in_dim, out_dim, kernel_size = kernel_size, stride=stride, padding=padding)
        layers.append(deconv)
        if batchnorm:
            layers.append(nn.BatchNorm2d(out_dim))
        return nn.Sequential(*layers)


    class Generator(nn.Module):
        def __init__(self, z_size=100, conv_dim=32):
            super().__init__()
            self.conv_dim = conv_dim
            # make the 1d input into a 3d output of shape (conv_dim*4, 4, 4)
            self.fc = nn.Linear(z_size, conv_dim*4*4*4)  # 4x4
            # conv and deconv layers work on 3d volumes, so we now only need to pass
            # the number of fmaps; the input volume size (h, w) is 4x4
            self.drp = nn.Dropout(0.5)
            self.deconv1 = deconv_convtranspose(conv_dim*4, conv_dim*3, kernel_size =4, stride=2, padding=1)
            self.deconv2 = deconv_convtranspose(conv_dim*3, conv_dim*2, kernel_size =4, stride=2, padding=1)
            self.deconv3 = deconv_convtranspose(conv_dim*2, conv_dim, kernel_size =4, stride=2, padding=1)
            self.deconv4 = deconv_convtranspose(conv_dim, conv_dim, kernel_size =3, stride=2, padding=1)
            # no BatchNorm in the last layer
            self.deconv5 = deconv_convtranspose(conv_dim, 3, kernel_size =4, stride=1, padding=1, batchnorm=False)

        def forward(self, input):
            output = self.fc(input)
            output = self.drp(output)
            output = output.view(-1, self.conv_dim*4, 4, 4)
            output = F.relu(self.deconv1(output))
            output = F.relu(self.deconv2(output))
            output = F.relu(self.deconv3(output))
            output = F.relu(self.deconv4(output))
            # we create the image using tanh!
            output = F.tanh(self.deconv5(output))
            return output


    # testing nets
    dd = Discriminator()
    zd = np.random.rand(2,3,64,64)
    zd = torch.from_numpy(zd).float()
    # print(dd)
    print(dd(zd).shape)

    gg = Generator()
    z = np.random.uniform(-1,1,size=(2,100))
    z = torch.from_numpy(z).float()
    print(gg(z).shape)
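
To double-check the layer geometry without running the networks, the spatial sizes can be traced by hand. This is a rough sketch using the standard output-size formulas for `nn.Conv2d` and `nn.ConvTranspose2d`, assuming the defaults `dilation=1` and `output_padding=0` (which is what the code above uses). The helper names `conv_out`, `deconv_out`, and `trace` are made up for illustration; the `(kernel, stride, padding)` tuples are copied from the two classes.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a Conv2d along one spatial dimension (dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def deconv_out(size, kernel, stride, padding):
    """Output size of a ConvTranspose2d along one dimension (output_padding=0)."""
    return (size - 1) * stride - 2 * padding + kernel


def trace(size, layers, fn):
    """Apply fn layer by layer and collect every intermediate size."""
    sizes = [size]
    for kernel, stride, padding in layers:
        size = fn(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


# (kernel, stride, padding) for conv1..conv6 of the Discriminator
disc_layers = [(4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 1, 1), (4, 2, 1), (3, 1, 1)]
# (kernel, stride, padding) for deconv1..deconv5 of the Generator
gen_layers = [(4, 2, 1), (4, 2, 1), (4, 2, 1), (3, 2, 1), (4, 1, 1)]

disc_sizes = trace(64, disc_layers, conv_out)   # [64, 32, 16, 8, 7, 3, 3]
gen_sizes = trace(4, gen_layers, deconv_out)    # [4, 8, 16, 32, 63, 64]
```

The final 3x3 map in the Discriminator matches the `conv_dim*10*3*3` input of its linear layer, and the Generator ends at 64x64, so the shapes themselves look consistent.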