I am having difficulty understanding the space leak described in Liu and Hudak's paper "Plugging a Space Leak with an Arrow" (https://www.sciencedirect.com/science/article/pii/S1571066107005919).

1) What exactly does O(n) space complexity mean here? Is it the total memory allocated as a function of input size, or the peak amount live at any one time? How does garbage collection along the way factor in?

2) If the total-allocation definition in 1) holds, why do they say on page 34 that if `dt` is constant, the signal type is akin to the list type and runs in constant space? Doesn't `integralC` still allocate one unit of space at each step, n units in total, which is still O(n)?
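For reference, this is roughly how I understand the definitions I am asking about. It is a minimal sketch reconstructed from my reading of the paper, so apart from `integralC` the names (`DTime`, `C`, `eC`, and the helper `runC`) are my assumptions and may not match the paper exactly:

```haskell
-- Assumed alias: the time step between two consecutive samples.
type DTime = Double

-- Assumed signal type: the current sample paired with a continuation
-- that yields the rest of the signal once the next time step is known.
newtype C a = C (a, DTime -> C a)

-- Forward-Euler integration starting from the initial value i.
integralC :: Double -> C Double -> C Double
integralC i (C p) = C (i, \dt -> integralC (i + fst p * dt) (snd p dt))

-- The recursive signal e = 1 + integral of e (my name for it).
eC :: C Double
eC = integralC 1 eC

-- Hypothetical helper: sample a signal along a list of time steps.
runC :: C a -> [DTime] -> [a]
runC (C (x, _)) []         = [x]
runC (C (x, k)) (dt : dts) = x : runC (k dt) dts
```

With this sketch, `runC eC (replicate n 0.1)` is the expression whose time and space behaviour I am trying to reason about.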

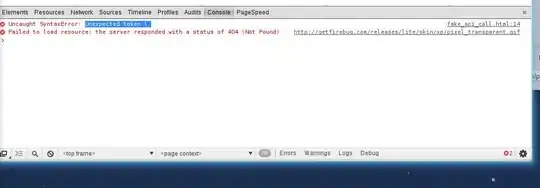

3) I do not understand at all why the time complexity is O(n^2). I have an inkling of what needs to be evaluated (i', i'', i''' in the picture below), but how does that add up to O(n^2)?

The image shows the evaluation steps, which I have drawn in lambda graph notation. At each step, the new structure is ADDED to the overall scope rather than REPLACING what is already there. A square denotes a pointer, so, for example, square(i') in step 2 points to the i' block in step 1.