I'm using the Kubernetes Continuous Deploy plugin to deploy and upgrade a Deployment on my Kubernetes cluster. I'm using a pipeline job, and this is the Jenkinsfile:

    pipeline {
        environment {
            JOB_NAME = "${JOB_NAME}".replace("-deploy", "")
            REGISTRY = "my-docker-registry"
        }
        agent any
        stages {
            stage('Fetching kubernetes config files') {
                steps {
                    git 'git_url_of_k8s_configurations'
                }
            }
            stage('Deploy on kubernetes') {
                steps {
                    kubernetesDeploy(
                        kubeconfigId: 'k8s-default-namespace-config-id',
                        configs: 'deployment.yml',
                        enableConfigSubstitution: true
                    )
                }
            }
        }
    }

The deployment.yml is:

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: ${JOB_NAME}
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            build_number: ${BUILD_NUMBER}
            app: ${JOB_NAME}
            role: rolling-update
        spec:
          containers:
          - name: ${JOB_NAME}-container
            image: ${REGISTRY}/${JOB_NAME}:latest
            ports:
            - containerPort: 8080
            envFrom:
            - configMapRef:
                name: postgres
          imagePullSecrets:
          - name: regcred
      strategy:
        type: RollingUpdate

To let Kubernetes detect that the Deployment has changed (and so upgrade it and its pods), I used the Jenkins build number as a label on the pod template:

    ...
    metadata:
      labels:
        build_number: ${BUILD_NUMBER}
    ...
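
To make the substitution step concrete, here is a minimal Python sketch of what I understand `enableConfigSubstitution` to do with the labels above. This is not the plugin's actual code: `string.Template` is only a stand-in for the plugin's variable substitution, the `render` helper is hypothetical, and `my-app` and the build numbers are made-up values.

```python
from string import Template

# Label fragment of the pod template from deployment.yml.
POD_TEMPLATE_LABELS = """\
labels:
  build_number: ${BUILD_NUMBER}
  app: ${JOB_NAME}
  role: rolling-update
"""


def render(template: str, env: dict) -> str:
    """Replace ${VAR} placeholders with values from env.

    safe_substitute leaves unknown placeholders untouched instead of raising.
    """
    return Template(template).safe_substitute(env)


# Hypothetical environment for two consecutive builds of the same job.
build_1 = render(POD_TEMPLATE_LABELS, {"JOB_NAME": "my-app", "BUILD_NUMBER": "1"})
build_2 = render(POD_TEMPLATE_LABELS, {"JOB_NAME": "my-app", "BUILD_NUMBER": "2"})

print(build_1)
print(build_2)
print("pod template changed:", build_1 != build_2)
```

The only difference between the two rendered fragments is the `build_number` label, which is what makes the pod template differ from one deploy to the next.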

The problem (or my misunderstanding):

If the Deployment does not exist on Kubernetes yet, everything works fine: one Deployment and one ReplicaSet are created.

If the Deployment already exists and an upgrade is applied, Kubernetes creates a new ReplicaSet:

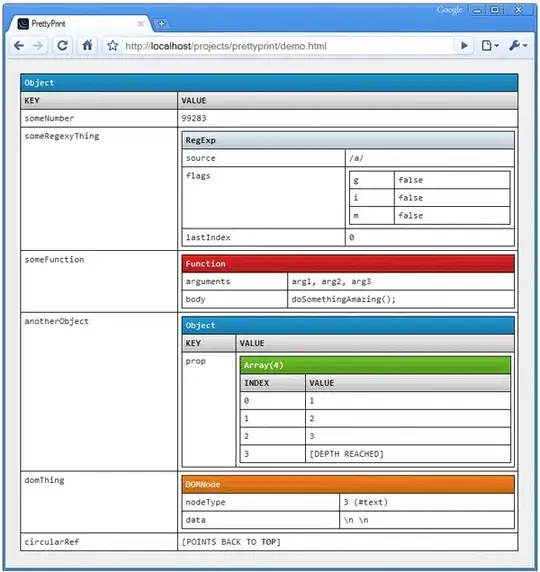

(Screenshots, in order: before the first deploy, first deploy, second deploy, third deploy.)

As you can see, each new Jenkins deploy updates the Deployment correctly but creates a new ReplicaSet without removing the old one.

What could be the issue?