I'm trying to transfer about 10 GB of JSON data (tweets, in my case) into a collection in ArangoDB. I'm also using joblib for it:

```python
from glob import glob
import json
import time

from joblib import Parallel, delayed

from ArangoConn import ArangoConn
import Userdata as U


def progress(total, prog, start, stri=""):
    if prog == 0:
        print("")
        prog = 1
    perc = prog / total
    diff = time.time() - start
    rem = (diff / prog) * (total - prog)  # estimated seconds remaining
    filled = int(perc * 20)
    bar = "|" * filled + " " * (20 - filled)
    print("\rprogress: [" + bar + "] " + str(prog) + " of " + str(total)
          + ": {0:.1f}% ".format(perc * 100) + "- "
          + time.strftime("%H:%M:%S", time.gmtime(rem)) + " " + stri, end="")


def processfile(filepath):
    with open(filepath, encoding="utf-8") as file:
        data = json.load(file)  # a list of tweet dicts
    # one task per tweet, run on 12 threads
    Parallel(n_jobs=12, verbose=0, backend="threading")(
        delayed(ArangoConn.createDocFromObject)(tweet) for tweet in data)


files = glob(U.path + "/*.json")
starttime = time.time()
for i, f in enumerate(files, start=1):
    progress(len(files), i, starttime, f)
    processfile(f)
```

and the `ArangoConn` module:

```python
import time

from pyArango.connection import Connection

import Userdata as U


class ArangoConn:
    def __init__(self, server, user, pw, db, collectionname):
        self.server = server
        self.user = user
        self.pw = pw
        self.db = db
        self.collectionname = collectionname
        self.connection = None
        self.dbHandle = self.connect()
        # reuse the collection if it exists, otherwise create it
        if not self.dbHandle.hasCollection(name=self.collectionname):
            coll = self.dbHandle.createCollection(name=collectionname)
        else:
            coll = self.dbHandle.collections[collectionname]
        self.collection = coll

    def db_createDocFromObject(self, obj):
        data = vars(obj)  # __dict__ is an attribute, not a method
        doc = self.collection.createDocument()
        for key, value in data.items():
            doc[key] = value
        # millisecond timestamp as key
        doc._key = str(int(round(time.time() * 1000)))
        doc.save()

    def connect(self):
        self.connection = Connection(arangoURL=self.server + ":8529",
                                     username=self.user, password=self.pw)
        if not self.connection.hasDatabase(self.db):
            db = self.connection.createDatabase(name=self.db)
        else:
            db = self.connection.databases.get(self.db)
        return db

    def disconnect(self):
        self.connection.disconnectSession()

    def getAllData(self):
        return [self.doc_to_result(doc) for doc in self.collection.fetchAll()]

    def addData(self, obj):
        self.db_createDocFromObject(obj)

    def search(self, collection, search, prop):
        # bind parameters instead of string concatenation, so quotes in `search` cannot break the query
        aql = 'FOR q IN @@coll FILTER q[@prop] LIKE CONCAT("%", @search, "%") RETURN q'
        bind = {"@coll": collection, "prop": prop, "search": search}
        results = self.dbHandle.AQLQuery(aql, rawResults=False, batchSize=1, bindVars=bind)
        return [self.doc_to_result(doc) for doc in results]

    def doc_to_result(self, arangodoc):
        modstore = arangodoc.getStore()
        modstore["_key"] = arangodoc._key
        return modstore

    def db_createDocFromJson(self, docs):
        for d in docs:
            doc = self.collection.createDocument()
            for key, value in d.items():
                doc[key] = value
            doc._key = str(int(round(time.time() * 1000)))
            doc.save()

    @staticmethod
    def createDocFromObject(obj):
        # opens a fresh connection for every single document
        c = ArangoConn(U.url, U.user, U.pw, U.db, U.collection)
        doc = c.collection.createDocument()
        for key, value in obj.items():
            doc[key] = value
        doc._key = str(obj["id"])  # document keys must be strings
        doc.save()
        c.connection.disconnectSession()
```

It kind of works like that. My problem is that the data that lands in the database is somehow mixed up.

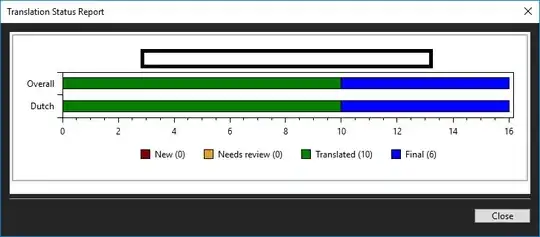

As you can see in the screenshot, `id` and `id_str` are not the same, although they should be.
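To narrow down where the two fields start to disagree, a small check like the following could be run both on the parsed JSON files and on documents read back from the collection (for example the dicts returned by `getAllData`). It assumes each tweet dict carries both `id` (a number) and `id_str` (the same id as a decimal string), as in the Twitter payload. `mismatched_ids` is a hypothetical helper, and the sample ids are made up.

```python
def mismatched_ids(tweets):
    """Return the id_str of every tweet whose numeric id disagrees with id_str."""
    bad = []
    for tweet in tweets:
        # id may be an int or a float, depending on which parser produced it
        if int(tweet["id"]) != int(tweet["id_str"]):
            bad.append(tweet["id_str"])
    return bad


ok = {"id": 876543210987654321, "id_str": "876543210987654321"}
rounded = {"id": 876543210987654400, "id_str": "876543210987654321"}
print(mismatched_ids([ok, rounded]))  # ['876543210987654321']
```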

What I have investigated so far:

I thought that the default keys in the database might "collide" at some point because of the threading, so I set the key to the tweet id.
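The timestamp-based key used in `db_createDocFromObject` collides whenever two documents are saved within the same millisecond. That becomes likely once 12 threads insert concurrently. The sketch below contrasts that scheme with keying on `id_str`. `timestamp_key`, `tweet_key` and `duplicate_keys` are hypothetical helpers, and the clock value is passed in explicitly so the collision is reproducible.

```python
import time
from collections import Counter


def timestamp_key(now=None):
    """Key scheme from db_createDocFromObject: milliseconds since the epoch."""
    if now is None:
        now = time.time()
    return str(int(round(now * 1000)))


def tweet_key(tweet):
    """Key on id_str: the exact decimal id, unique per tweet and already a string."""
    return tweet["id_str"]


def duplicate_keys(keys):
    """Map every key that occurs more than once to its number of occurrences."""
    return {key: n for key, n in Counter(keys).items() if n > 1}


# two saves 0.1 ms apart fall into the same millisecond
print(duplicate_keys([timestamp_key(1500000000.0001), timestamp_key(1500000000.0002)]))
# {'1500000000000': 2}
print(duplicate_keys([tweet_key({"id_str": "1"}), tweet_key({"id_str": "2"})]))
# {}
```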

I tried it without multiple threads. The threading doesn't seem to be the problem.
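For comparison, a single-threaded version of `processfile` can be as simple as the sketch below. To keep it testable without a server, the database write is passed in as a callable. `processfile_sequential` and its `save` parameter are hypothetical names.

```python
import json


def processfile_sequential(filepath, save):
    """Load one JSON array of tweets and hand each tweet to save(), one at a time."""
    with open(filepath, encoding="utf-8") as file:
        tweets = json.load(file)
    for tweet in tweets:
        save(tweet)  # no joblib, no threads: strictly one document after another
    return len(tweets)
```

With the code above, the main loop would call `processfile_sequential(f, ArangoConn.createDocFromObject)` in place of `processfile(f)`.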

I looked at the data I send to the database, and everything seems to be fine.

But as soon as I communicate with the database, the data gets mixed up.
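One hypothesis worth testing, not confirmed by the observations above, concerns the size of the ids. Tweet ids are larger than 2**53, the largest range in which an IEEE-754 double represents every integer exactly. If any component between Python and the stored document (driver, server or viewer) handles numbers as doubles, `id` would be silently rounded. `id_str`, being a string, would pass through unchanged.

Python's own `json` module keeps integers exact, which would fit the observation that the data looks fine before it is sent. The sketch simulates a double-based parser with `parse_int=float`. The sample id is made up.

```python
import json

# made-up tweet id, same order of magnitude as real ones (about 1e18)
raw = '{"id": 876543210987654321, "id_str": "876543210987654321"}'

exact = json.loads(raw)                       # Python ints are arbitrary precision
as_double = json.loads(raw, parse_int=float)  # simulates a parser that stores doubles

print(exact["id"] == int(exact["id_str"]))               # True
print(int(as_double["id"]) == int(as_double["id_str"]))  # False
print(2**53 + 1 == int(float(2**53 + 1)))                # False: smallest integer a double cannot hold
```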

My professor thought that something in pyArango might not be thread-safe and is messing up the data, but I don't think so, since threading doesn't seem to be the problem.
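Independently of thread-safety, `createDocFromObject` opens and closes a new connection for every single tweet. A common pattern is to create one connection per worker thread lazily with `threading.local` and reuse it. The sketch below is generic. `per_thread` and its `factory` parameter are hypothetical names. Whether a pyArango connection can safely be reused like this across many saves is an assumption that has not been verified here.

```python
import threading


def per_thread(factory):
    """Return a getter that builds one object per thread and reuses it afterwards."""
    local = threading.local()

    def get():
        if not hasattr(local, "value"):
            local.value = factory()  # first call in this thread
        return local.value

    return get
```

In the question's setup, `factory` could be `lambda: ArangoConn(U.url, U.user, U.pw, U.db, U.collection)`. Each joblib task would then call the getter instead of constructing a new `ArangoConn`.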

I have run out of ideas about where this behavior could come from. Any ideas?