I'm trying to record my app's screen using ReplayKit, cropping out some parts of it while recording the video, but it is not going well.

ReplayKit captures the entire screen, so I decided to receive each frame from ReplayKit (as a CMSampleBuffer via startCaptureWithHandler), crop it there, and feed it to a video writer via AVAssetWriterInputPixelBufferAdaptor. But I am having trouble hard-copying the image buffer before cropping it.
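For reference, the cropping step itself can be sketched in plain C (which also compiles as Objective-C). This is only a sketch under stated assumptions: the pixels are assumed to be packed at 4 bytes each (as in 32BGRA), which is not verified for the buffers ReplayKit delivers, and the name `crop_packed32` and its parameters are hypothetical, not part of any Apple API.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Assumption: packed 32-bit pixels such as BGRA (4 bytes per pixel). */
enum { BYTES_PER_PIXEL = 4 };

/* Copies a crop_w x crop_h rectangle whose top-left corner is at
 * (crop_x, crop_y) from src into dst, one row at a time.
 * src_stride and dst_stride are bytes per row; either may be larger
 * than width * 4 because rows can carry trailing padding. */
static void crop_packed32(const uint8_t *src, size_t src_stride,
                          size_t crop_x, size_t crop_y,
                          size_t crop_w, size_t crop_h,
                          uint8_t *dst, size_t dst_stride)
{
    assert(src != NULL && dst != NULL);
    assert(dst_stride >= crop_w * BYTES_PER_PIXEL);

    for (size_t row = 0; row < crop_h; row++) {
        /* Start of the wanted pixels in this source row. */
        const uint8_t *src_row =
            src + (crop_y + row) * src_stride + crop_x * BYTES_PER_PIXEL;
        uint8_t *dst_row = dst + row * dst_stride;
        memcpy(dst_row, src_row, crop_w * BYTES_PER_PIXEL);
    }
}
```

Copying row by row, rather than in one contiguous run, keeps the source and destination strides independent, which matters whenever bytes per row is not exactly `4 * width`.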

This is my working code that records the entire screen:

    // Starts recording with a completion/error handler
    -(void)startRecordingWithHandler: (RPHandler)handler
    {
        // Sets up AVAssetWriter that will generate a video file from the recording.
        self.writer = [AVAssetWriter assetWriterWithURL:self.outputFileURL
                                               fileType:AVFileTypeQuickTimeMovie
                                                  error:nil];

        NSDictionary* outputSettings =
        @{
            AVVideoWidthKey  : @(screen.size.width),   // The whole width of the entire screen.
            AVVideoHeightKey : @(screen.size.height),  // The whole height of the entire screen.
            AVVideoCodecKey  : AVVideoCodecTypeH264,
        };

        // Sets up AVAssetWriterInput that will feed ReplayKit's frame buffers to the writer.
        self.videoInput = [AVAssetWriterInput assetWriterInputWithMediaType:AVMediaTypeVideo
                                                             outputSettings:outputSettings];

        // Lets it know that the input will be realtime using ReplayKit.
        [self.videoInput setExpectsMediaDataInRealTime:YES];

        NSDictionary* sourcePixelBufferAttributes =
        @{
            (NSString*) kCVPixelBufferPixelFormatTypeKey: @(kCVPixelFormatType_32BGRA),
            (NSString*) kCVPixelBufferWidthKey : @(screen.size.width),
            (NSString*) kCVPixelBufferHeightKey : @(screen.size.height),
        };

        // Adds the video input to the writer.
        [self.writer addInput:self.videoInput];

        // Sets up ReplayKit itself.
        self.recorder = [RPScreenRecorder sharedRecorder];

        // Arranges the pipeline from ReplayKit to the input.
        RPBufferHandler bufferHandler = ^(CMSampleBufferRef sampleBuffer, RPSampleBufferType bufferType, NSError* error) {
            [self captureSampleBuffer:sampleBuffer withBufferType:bufferType];
        };

        RPHandler errorHandler = ^(NSError* error) {
            if (error) handler(error);
        };

        // Starts ReplayKit's recording session.
        // Sample buffers will be sent to `captureSampleBuffer` method.
        [self.recorder startCaptureWithHandler:bufferHandler completionHandler:errorHandler];
    }

    // Receives a sample buffer from ReplayKit every frame.
    -(void)captureSampleBuffer:(CMSampleBufferRef)sampleBuffer withBufferType:(RPSampleBufferType)bufferType
    {
        // Uses a queue in sync so that the writer-starting logic won't be invoked twice.
        dispatch_sync(dispatch_get_main_queue(), ^{
            // Starts the writer if not started yet. We do this here in order to get the proper source time later.
            if (self.writer.status == AVAssetWriterStatusUnknown) {
                [self.writer startWriting];
                return;
            }

            // Receives a sample buffer from ReplayKit.
            switch (bufferType) {
                case RPSampleBufferTypeVideo:{
                    // Initializes the source time when a video frame buffer is received the first time.
                    // This prevents the output video from starting with blank frames.
                    if (!self.startedWriting) {
                        NSLog(@"self.writer startSessionAtSourceTime");
                        [self.writer startSessionAtSourceTime:CMSampleBufferGetPresentationTimeStamp(sampleBuffer)];
                        self.startedWriting = YES;
                    }

                    // Appends a received video frame buffer to the writer.
                    [self.videoInput appendSampleBuffer:sampleBuffer];
                    break;
                }
            }
        });
    }

    // Stops the current recording session, and saves the output file to the user photo album.
    -(void)stopRecordingWithHandler:(RPHandler)handler
    {
        // Closes the input.
        [self.videoInput markAsFinished];

        // Finishes up the writer.
        [self.writer finishWritingWithCompletionHandler:^{
            handler(self.writer.error);

            // Saves the output video to the user photo album.
            [[PHPhotoLibrary sharedPhotoLibrary] performChanges: ^{ [PHAssetChangeRequest creationRequestForAssetFromVideoAtFileURL: self.outputFileURL]; }
                                              completionHandler: ^(BOOL s, NSError* e) { }];
        }];

        // Stops ReplayKit's recording.
        [self.recorder stopCaptureWithHandler:nil];
    }

Here, each sample buffer from ReplayKit is fed directly to the writer (in the captureSampleBuffer method), so the entire screen is recorded.

Then I replaced that part with equivalent logic using AVAssetWriterInputPixelBufferAdaptor, which works just fine:

    ...
    case RPSampleBufferTypeVideo:{
        ... // Initializes source time.

        // Gets the timestamp of the sample buffer.
        CMTime time = CMSampleBufferGetPresentationTimeStamp(sampleBuffer);

        // Extracts the pixel image buffer from the sample buffer.
        CVPixelBufferRef imageBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

        // Appends a received sample buffer as an image buffer to the writer via the adaptor.
        [self.videoAdaptor appendPixelBuffer:imageBuffer withPresentationTime:time];
        break;
    }
    ...

where the adaptor is set up as:

    NSDictionary* sourcePixelBufferAttributes =
    @{
        (NSString*) kCVPixelBufferPixelFormatTypeKey: @(kCVPixelFormatType_32BGRA),
        (NSString*) kCVPixelBufferWidthKey : @(screen.size.width),
        (NSString*) kCVPixelBufferHeightKey : @(screen.size.height),
    };

    self.videoAdaptor = [AVAssetWriterInputPixelBufferAdaptor assetWriterInputPixelBufferAdaptorWithAssetWriterInput:self.videoInput
                                                                                         sourcePixelBufferAttributes:sourcePixelBufferAttributes];

So the pipeline is working.

Next, I created a hard copy of the image buffer in main memory and fed it to the adaptor:

    ...
    case RPSampleBufferTypeVideo:{
        ... // Initializes source time.

        // Gets the timestamp of the sample buffer.
        CMTime time = CMSampleBufferGetPresentationTimeStamp(sampleBuffer);

        // Extracts the pixel image buffer from the sample buffer.
        CVPixelBufferRef imageBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

        // Hard-copies the image buffer.
        CVPixelBufferRef copiedImageBuffer = [self copy:imageBuffer];

        // Appends a received video frame buffer to the writer via the adaptor.
        [self.videoAdaptor appendPixelBuffer:copiedImageBuffer withPresentationTime:time];
        break;
    }
    ...

    // Hard-copies the pixel buffer.
    -(CVPixelBufferRef)copy:(CVPixelBufferRef)inputBuffer
    {
        // Locks the base address of the buffer
        // so that GPU won't change the data until unlocked later.
        CVPixelBufferLockBaseAddress(inputBuffer, 0); //-------------------------------

        char* baseAddress = (char*)CVPixelBufferGetBaseAddress(inputBuffer);
        size_t bytesPerRow = CVPixelBufferGetBytesPerRow(inputBuffer);
        size_t width = CVPixelBufferGetWidth(inputBuffer);
        size_t height = CVPixelBufferGetHeight(inputBuffer);
        size_t length = bytesPerRow * height;

        // Mallocs the same length as the input buffer for copying.
        char* outputAddress = (char*)malloc(length);

        // Copies the input buffer's data to the malloced space.
        for (int i = 0; i < length; i++) {
            outputAddress[i] = baseAddress[i];
        }

        // Create a new image buffer using the copied data.
        CVPixelBufferRef outputBuffer;
        CVPixelBufferCreateWithBytes(kCFAllocatorDefault,
                                     width,
                                     height,
                                     kCVPixelFormatType_32BGRA,
                                     outputAddress,
                                     bytesPerRow,
                                     &releaseCallback, // Releases the malloced space.
                                     NULL,
                                     NULL,
                                     &outputBuffer);

        // Unlocks the base address of the input buffer
        // So that GPU can restart using the data.
        CVPixelBufferUnlockBaseAddress(inputBuffer, 0); //-------------------------------

        return outputBuffer;
    }

    // Releases the malloced space.
    void releaseCallback(void *releaseRefCon, const void *baseAddress)
    {
        free((void *)baseAddress);
    }

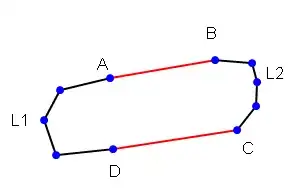

This doesn't work -- the saved video will look like the screenshot on the right hand:

It seems like the bytes per row and the color format are wrong. I have researched and experimented with the following, but to no avail:

- Hard-coding `4 * width` for bytes per row -> "bad access".
- Using `int` and `double` instead of `char` -> some weird debugger-terminating exceptions.
- Using other image formats -> either "not supported" or access errors.

Additionally, releaseCallback is never called, so RAM runs out within 10 seconds of recording.
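On the callback question, my understanding is that `CVPixelBufferCreateWithBytes` follows the Core Foundation "Create" ownership rule, so the caller owns the returned buffer and the release callback can only run after the last reference is dropped (for example with `CVPixelBufferRelease`). The toy model below is not Core Video; `ToyBuffer`, `toy_create`, `toy_retain`, `toy_release`, and `count_and_free` are hypothetical names used only to illustrate that lifecycle in plain C.

```c
#include <assert.h>
#include <stdlib.h>

typedef void (*ToyReleaseCallback)(void *ref_con, const void *base_address);

/* Hypothetical reference-counted wrapper around caller-allocated bytes. */
typedef struct {
    int retain_count;
    void *bytes;
    ToyReleaseCallback on_release;
    void *ref_con;
} ToyBuffer;

/* Wraps `bytes`; the caller owns the returned buffer (retain count 1). */
static ToyBuffer *toy_create(void *bytes, ToyReleaseCallback cb, void *ref_con)
{
    ToyBuffer *b = malloc(sizeof *b);
    assert(b != NULL);
    b->retain_count = 1;
    b->bytes = bytes;
    b->on_release = cb;
    b->ref_con = ref_con;
    return b;
}

/* Adds a reference, e.g. a consumer holding on to the frame. */
static void toy_retain(ToyBuffer *b) { b->retain_count++; }

/* Drops one reference; the callback fires only when the count reaches zero. */
static void toy_release(ToyBuffer *b)
{
    if (--b->retain_count == 0) {
        if (b->on_release) b->on_release(b->ref_con, b->bytes);
        free(b);
    }
}

/* Same shape as releaseCallback above; also counts calls through ref_con. */
static void count_and_free(void *ref_con, const void *base_address)
{
    if (ref_con) (*(int *)ref_con)++;
    free((void *)base_address);
}
```

In this model, if the creator never calls `toy_release`, the callback never runs and the wrapped bytes are never freed. The code above never releases `copiedImageBuffer` after `appendPixelBuffer`, which would be consistent with that pattern if Core Video behaves the same way.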

Judging from the look of this output, what are the potential causes?