I am trying to train a very deep model on Cloud ML, but I am running into serious memory issues that I have not managed to work around. The model is a very deep convolutional neural network for auto-tagging music.

The model is shown in the image below. The network takes a batch of 20 examples, each a tensor of shape 12x38832x1.

Each track was originally 465984x1 samples and was then split into 12 windows, hence 12x38832x1. With the map_fn function, each iteration processes one separate 38832x1 window (conv1d).
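To make the windowing concrete, here is a minimal sketch in plain Python of splitting a track into equal, non-overlapping windows. It is not my actual preprocessing code: the function name `split_into_windows` is hypothetical, and the track is a dummy list of zeros standing in for real audio samples.

```python
NUM_WINDOWS = 12
WINDOW_SIZE = 38832  # samples per window, as in the 12x38832x1 tensor


def split_into_windows(samples, num_windows):
    """Split a 1-D sequence into equal, non-overlapping windows."""
    if len(samples) % num_windows != 0:
        raise ValueError("track length must be divisible by the number of windows")
    size = len(samples) // num_windows
    return [samples[i * size:(i + 1) * size] for i in range(num_windows)]


# Dummy track of zeros standing in for real audio samples (assumption).
track = [0.0] * (NUM_WINDOWS * WINDOW_SIZE)
windows = split_into_windows(track, NUM_WINDOWS)
print(len(windows), len(windows[0]))
```

In the real pipeline this split happens once, offline, before the examples are written to TFRecords.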

Processing one window at a time yields better results than processing the whole track with a single CNN. The split was done before storing the data in TFRecords, to minimise the processing needed during training. The data is loaded into a queue with a maximum size of 200 samples (i.e. 10 batches).
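As a rough sanity check on the input side only, the sketch below estimates how much memory one batch and a full queue would occupy. It assumes the samples are stored as float32 (4 bytes each), which may not match the actual dtype, and it says nothing about the activations and gradients of the deep network itself, which I expect to be far larger.

```python
BYTES_PER_FLOAT32 = 4  # assumption: samples are stored as float32


def tensor_bytes(shape, bytes_per_element=BYTES_PER_FLOAT32):
    """Return the size in bytes of a dense tensor with the given shape."""
    count = 1
    for dim in shape:
        count *= dim
    return count * bytes_per_element


SAMPLE_SHAPE = (12, 38832, 1)  # one example: 12 windows of 38832x1
BATCH_SIZE = 20
QUEUE_CAPACITY = 200           # maximum queue size, in examples

batch_bytes = tensor_bytes((BATCH_SIZE,) + SAMPLE_SHAPE)
queue_bytes = tensor_bytes((QUEUE_CAPACITY,) + SAMPLE_SHAPE)

print("one batch : %.1f MiB" % (batch_bytes / 2.0 ** 20))
print("full queue: %.1f MiB" % (queue_bytes / 2.0 ** 20))
```

Under these assumptions the input queue stays in the hundreds of megabytes, so it is unlikely to be what exhausts memory on its own.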

Once dequeued, the tensor is transposed so that the 12 dimension comes first, which lets map_fn iterate over the windows. It is not transposed before being queued because the first dimension needs to match the batch dimension of the output, which is [20, 50], where 20 is the batch size and 50 is the number of tags.

Each window is processed separately, and the results of the map_fn iterations are superpooled using a smaller network. The windows are processed by a very deep neural network, and this is the part I cannot get to fit: every configuration I have tried gives me out-of-memory errors.
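The shape bookkeeping for that transpose can be sketched in plain Python. The helper `transpose_shape` is hypothetical and only mimics how a `perm` argument reorders axes (as in `tf.transpose`); the trailing channel dimension of 1 comes from the 12x38832x1 tensor.

```python
def transpose_shape(shape, perm):
    """Return the shape after a transpose: output axis i is input axis perm[i]."""
    return tuple(shape[axis] for axis in perm)


BATCH_SIZE, NUM_WINDOWS, WINDOW_SIZE, CHANNELS = 20, 12, 38832, 1
NUM_TAGS = 50

# Shape of a dequeued batch: batch dimension first.
dequeued_shape = (BATCH_SIZE, NUM_WINDOWS, WINDOW_SIZE, CHANNELS)

# Swap the first two axes so that the window dimension comes first.
windows_first = transpose_shape(dequeued_shape, (1, 0, 2, 3))

# A map over the leading dimension sees one window for the whole batch at a time.
per_window_input = windows_first[1:]

# After pooling over the windows, the output is back to batch-first.
output_shape = (BATCH_SIZE, NUM_TAGS)

print(windows_first, per_window_input, output_shape)
```

Each map_fn iteration therefore receives a [20, 38832, 1] tensor, which is the input the conv1d stack works on.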

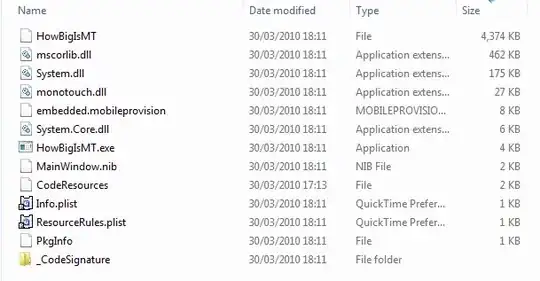

As a template, I am using code similar to the Census TensorFlow sample.

First and foremost, I am not sure this is the best option, since for evaluation a separate graph is built rather than sharing variables. This would require double the number of parameters.

Secondly, for the cluster setup I have been using one complex_model_l master, 3 complex_model_l workers and 3 large_model parameter servers. I do not know if I am underestimating the amount of memory needed here.

My model previously worked with a much smaller network. However, once I increased its size, I started getting out-of-memory errors.

My questions are:

The memory requirement is large, but I am sure it can be handled on Cloud ML. Am I underestimating the amount of memory needed? What cluster would you suggest for such a network?

When using a tf.train.Server in the dispatch function, do you need to pass the cluster_spec on so that it is used in the replica_device_setter, or does it allocate devices on its own? When I do not pass it and set log_device_placement in tf.ConfigProto, all the variables seem to be placed on the master worker. In the Census example, task.py does not pass it on. Can I assume this is correct?

How does one calculate how much memory is needed for a model (rough estimate to select the cluster)?

Is there any other TensorFlow tutorial on how to set up such big jobs (other than Census)?

When training a big model with distributed between-graph replication, does the whole model need to fit on each worker, or does the worker only run ops and then send the results to the PS? Does that mean the workers can have little memory, just enough for individual ops?

PS: With smaller models the network trained successfully. I am trying to deepen the network for better ROC.

Questions coming up from on-going troubleshooting:

When using the replica_device_setter with the cluster parameter, I noticed that the master has very little memory and CPU usage, and the device placement log shows very few ops on the master. I checked the TF_CONFIG that is loaded, and it shows the following for the cluster field:

u'cluster': {u'ps': [u'ps-4da746af4e-0:2222'], u'worker': [u'worker-4da746af4e-0:2222'], u'master': [u'master-4da746af4e-0:2222']}
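For anyone wanting to reproduce the check, this is roughly how the cluster field can be inspected, using only the standard library. It is a sketch: the helper name `summarize_cluster` is hypothetical, and the `task` entry in the example is an assumption added for illustration, since only the cluster field is shown above.

```python
import json


def summarize_cluster(tf_config_json):
    """Return a dict mapping each job name to its number of tasks."""
    config = json.loads(tf_config_json)
    cluster = config.get("cluster", {})
    return {job: len(addresses) for job, addresses in cluster.items()}


# Example value mirroring the cluster field above.
# The "task" entry is an assumption for illustration.
example_tf_config = json.dumps({
    "cluster": {
        "ps": ["ps-4da746af4e-0:2222"],
        "worker": ["worker-4da746af4e-0:2222"],
        "master": ["master-4da746af4e-0:2222"],
    },
    "task": {"type": "master", "index": 0},
})

for job, count in sorted(summarize_cluster(example_tf_config).items()):
    print("%s: %d task(s)" % (job, count))
```

In a real job the string would come from `os.environ.get("TF_CONFIG", "{}")` instead of the hard-coded example. The summary makes it easy to see that `master` is listed as its own job, separate from `worker`.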

On the other hand, the tf.train.ClusterSpec documentation only shows workers. Does that mean the master is not considered a worker? What happens in that case?

Is the error caused by memory or by something else? Is it an EOF error?