I have a neural network which is organized as follows:

conv1 - pool1 - local response normalization (lrn1) - conv2 - lrn2 - pool2 - conv3 - pool3 - conv4 - pool4 - conv5 - pool5 - dense layer (local1) - local2 - softmax

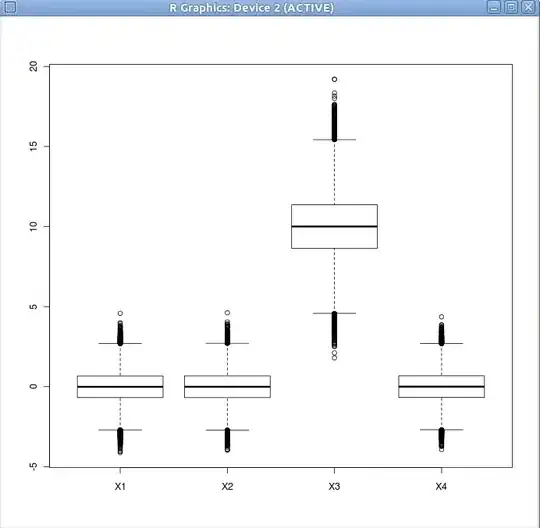

After looking at the distributions in TensorBoard, I got the following:

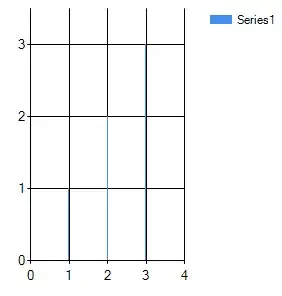

The following figures show the output of the activations over time.


From the loss figure, it is clear that the network is learning. The bias histograms also show that the biases are modified as a result of learning. But what about the weights? They look like they have not changed over time. Does what I am seeing in these figures make sense? Please note that I have posted only a subset of the weight and bias figures from the graph. All the other weight figures look like the ones presented here, and likewise for the biases. In short, the biases appear to learn, while the weights do not!
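One way to check this beyond eyeballing histograms would be to compare two snapshots of the same weight tensor taken at different training steps. The following is only a minimal sketch: it assumes the values have already been fetched (for example with `sess.run`) and flattened into plain Python lists, and `relative_change` is a hypothetical helper name, not part of TensorFlow. The snapshot numbers are made up for illustration.

```python
import math


def relative_change(before, after):
    """Return ||after - before|| / ||before|| for two flat lists of floats."""
    diff_norm = math.sqrt(sum((a - b) ** 2 for a, b in zip(after, before)))
    base_norm = math.sqrt(sum(b ** 2 for b in before))
    if base_norm == 0.0:
        # Avoid dividing by zero when the starting tensor is all zeros.
        return float("inf") if diff_norm > 0.0 else 0.0
    return diff_norm / base_norm


# Hypothetical snapshots of one flattened weight tensor (illustrative values only).
weights_step_0 = [0.020, -0.010, 0.030, -0.025]
weights_step_n = [0.021, -0.011, 0.029, -0.024]

print("relative change:", relative_change(weights_step_0, weights_step_n))
```

A value close to zero for every layer would suggest the weights really are static; a small but non-zero value would suggest they are moving by an amount too small to be visible at the scale of the histogram.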


Here is how I constructed the graph:

    import tensorflow as tf  # TensorFlow 1.x (the code below uses tf.contrib)

    # Parameters
    learning_rate = 0.0001
    batch_size = 1024
    n_classes = 1  # 1 since we need the value of the retrainer.

    weights = {
        'weights_conv1': tf.get_variable(name='weights1', shape=[5, 5, 3, 128], dtype=tf.float32,
                                         initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32)),
        'weights_conv2': tf.get_variable(name='weights2', shape=[3, 3, 128, 128], dtype=tf.float32,
                                         initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32)),
        'weights_conv3': tf.get_variable(name='weights3', shape=[3, 3, 128, 256], dtype=tf.float32,
                                         initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32)),
        'weights_conv4': tf.get_variable(name='weights4', shape=[3, 3, 256, 256], dtype=tf.float32,
                                         initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32)),
        'weights_conv5': tf.get_variable(name='weights5', shape=[3, 3, 256, 256], dtype=tf.float32,
                                         initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32)),
    }

    biases = {
        'bc1': tf.Variable(tf.constant(0.1, shape=[128], dtype=tf.float32), trainable=True, name='biases1'),
        'bc2': tf.Variable(tf.constant(0.1, shape=[128], dtype=tf.float32), trainable=True, name='biases2'),
        'bc3': tf.Variable(tf.constant(0.1, shape=[256], dtype=tf.float32), trainable=True, name='biases3'),
        'bc4': tf.Variable(tf.constant(0.1, shape=[256], dtype=tf.float32), trainable=True, name='biases4'),
        'bc5': tf.Variable(tf.constant(0.1, shape=[256], dtype=tf.float32), trainable=True, name='biases5')
    }
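For context when reading the histograms: the biases above all start at exactly 0.1, while the weights start spread out according to a Xavier (Glorot) normal initializer. The sketch below is a rough back-of-the-envelope estimate of that initial spread using the textbook Glorot formula, std = sqrt(2 / (fan_in + fan_out)). It is an assumption that this approximates what `xavier_initializer_conv2d(uniform=False)` produces; TensorFlow's implementation may differ in details such as truncation. `glorot_normal_std` is a hypothetical helper name.

```python
import math


def glorot_normal_std(shape):
    """Textbook Glorot std for a kernel shaped [..., in_channels, out_channels]."""
    receptive_field = 1
    for dim in shape[:-2]:
        receptive_field *= dim
    fan_in = shape[-2] * receptive_field
    fan_out = shape[-1] * receptive_field
    return math.sqrt(2.0 / (fan_in + fan_out))


# Kernel shapes copied from the weights dictionary above.
conv_shapes = {
    "weights_conv1": [5, 5, 3, 128],
    "weights_conv2": [3, 3, 128, 128],
    "weights_conv3": [3, 3, 128, 256],
    "weights_conv4": [3, 3, 256, 256],
    "weights_conv5": [3, 3, 256, 256],
}

for name, shape in conv_shapes.items():
    print(name, "approx. initial std:", glorot_normal_std(shape))
```

Comparing these scales with `learning_rate = 0.0001` may help judge how large a shift in the weights would need to be before it becomes visible in a histogram.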

    def inference(frames):
        # frames = tf.Print(frames, data=[tf.shape(frames)], message='f size is:')
        tf.summary.image('frame_resized', frames, max_outputs=32)
        frame_normalized_sub = tf.subtract(frames, tf.constant(128, dtype=tf.float32))
        frame_normalized = tf.divide(frame_normalized_sub, tf.constant(255.0), name='image_normalization')

        # conv1
        with tf.name_scope('conv1') as scope:
            conv_2d_1 = tf.nn.conv2d(frame_normalized, weights['weights_conv1'], strides=[1, 4, 4, 1], padding='SAME')
            conv_2d_1_plus_bias = tf.nn.bias_add(conv_2d_1, biases['bc1'])
            conv1 = tf.nn.relu(conv_2d_1_plus_bias, name=scope)
            tf.summary.histogram('con1_output_distribution', conv1)
            tf.summary.histogram('con1_before_relu', conv_2d_1_plus_bias)

        # norm1
        with tf.name_scope('norm1'):
            norm1 = tf.nn.lrn(conv1, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75, name='norm1')
            tf.summary.histogram('norm1_output_distribution', norm1)

        # pool1
        with tf.name_scope('pool1') as scope:
            pool1 = tf.nn.max_pool(norm1,
                                   ksize=[1, 3, 3, 1],
                                   strides=[1, 2, 2, 1],
                                   padding='VALID',
                                   name='pool1')
            tf.summary.histogram('pool1_output_distribution', pool1)

        # conv2
        with tf.name_scope('conv2') as scope:
            conv_2d_2 = tf.nn.conv2d(pool1, weights['weights_conv2'], strides=[1, 1, 1, 1], padding='SAME')
            conv_2d_2_plus_bias = tf.nn.bias_add(conv_2d_2, biases['bc2'])
            conv2 = tf.nn.relu(conv_2d_2_plus_bias, name=scope)
            tf.summary.histogram('conv2_output_distribution', conv2)
            tf.summary.histogram('con2_before_relu', conv_2d_2_plus_bias)

        # norm2
        with tf.name_scope('norm2'):
            norm2 = tf.nn.lrn(conv2, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75,
                              name='norm2')
            tf.summary.histogram('norm2_output_distribution', norm2)

        # pool2
        with tf.name_scope('pool2'):
            pool2 = tf.nn.max_pool(norm2,
                                   ksize=[1, 3, 3, 1],
                                   strides=[1, 2, 2, 1],
                                   padding='VALID',
                                   name='pool2')
            tf.summary.histogram('pool2_output_distribution', pool2)

        # conv3
        with tf.name_scope('conv3') as scope:
            conv_2d_3 = tf.nn.conv2d(pool2, weights['weights_conv3'], strides=[1, 1, 1, 1], padding='SAME')
            conv_2d_3_plus_bias = tf.nn.bias_add(conv_2d_3, biases['bc3'])
            conv3 = tf.nn.relu(conv_2d_3_plus_bias, name=scope)
            tf.summary.histogram('con3_output_distribution', conv3)
            tf.summary.histogram('con3_before_relu', conv_2d_3_plus_bias)

        # conv4
        with tf.name_scope('conv4') as scope:
            conv_2d_4 = tf.nn.conv2d(conv3, weights['weights_conv4'], strides=[1, 1, 1, 1], padding='SAME')
            conv_2d_4_plus_bias = tf.nn.bias_add(conv_2d_4, biases['bc4'])
            conv4 = tf.nn.relu(conv_2d_4_plus_bias, name=scope)
            tf.summary.histogram('con4_output_distribution', conv4)
            tf.summary.histogram('con4_before_relu', conv_2d_4_plus_bias)

        # conv5
        with tf.name_scope('conv5') as scope:
            conv_2d_5 = tf.nn.conv2d(conv4, weights['weights_conv5'], strides=[1, 1, 1, 1], padding='SAME')
            conv_2d_5_plus_bias = tf.nn.bias_add(conv_2d_5, biases['bc5'])
            conv5 = tf.nn.relu(conv_2d_5_plus_bias, name=scope)
            tf.summary.histogram('con5_output_distribution', conv5)
            tf.summary.histogram('con5_before_relu', conv_2d_5_plus_bias)

        # pool3
        pool3 = tf.nn.max_pool(conv5,
                               ksize=[1, 3, 3, 1],
                               strides=[1, 2, 2, 1],
                               padding='VALID',
                               name='pool5')
        tf.summary.histogram('pool3_output_distribution', pool3)

        # local1
        with tf.variable_scope('local1') as scope:
            # Move everything into depth so we can perform a single matrix multiply.
            shape_d = pool3.get_shape()
            shape = shape_d[1] * shape_d[2] * shape_d[3]
            # tf_shape = tf.stack(shape)
            tf_shape = 1024
            print("shape:", shape, shape_d[1], shape_d[2], shape_d[3])
            reshape = tf.reshape(pool3, [-1, tf_shape])
            weight_local1 = \
                tf.get_variable(name='weight_local1', shape=[tf_shape, 2046], dtype=tf.float32,
                                initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32))
            bias_local1 = tf.Variable(tf.constant(0.1, tf.float32, [2046]), trainable=True, name='bias_local1')
            local1_before_relu = tf.matmul(reshape, weight_local1) + bias_local1
            local1 = tf.nn.relu(local1_before_relu, name=scope.name)
            tf.summary.histogram('local1_output_distribution', local1)
            tf.summary.histogram('local1_before_relu', local1_before_relu)
            tf.summary.histogram('local1_weights', weight_local1)
            tf.summary.histogram('local1_biases', bias_local1)

        # local2
        with tf.variable_scope('local2') as scope:
            # Move everything into depth so we can perform a single matrix multiply.
            weight_local2 = \
                tf.get_variable(name='weight_local2', shape=[2046, 2046], dtype=tf.float32,
                                initializer=tf.contrib.layers.xavier_initializer_conv2d(uniform=False, dtype=tf.float32))
            bias_local2 = tf.Variable(tf.constant(0.1, tf.float32, [2046]), trainable=True, name='bias_local2')
            local2_before_relu = tf.matmul(local1, weight_local2) + bias_local2
            local2 = tf.nn.relu(local2_before_relu, name=scope.name)
            tf.summary.histogram('local2_output_distribution', local2)
            tf.summary.histogram('local2_before_relu', local2_before_relu)
            tf.summary.histogram('local2_weights', weight_local2)
            tf.summary.histogram('local2_biases', bias_local2)

        # linear Wx + b
        with tf.variable_scope('softmax_linear') as scope:
            weight_softmax = \
                tf.Variable(
                    tf.truncated_normal([2046, n_classes], stddev=1 / 1024, dtype=tf.float32), name='weight_softmax')
            bias_softmax = tf.Variable(tf.constant(0.0, tf.float32, [n_classes]), trainable=True, name='bias_softmax')
            softmax_linear = tf.add(tf.matmul(local2, weight_softmax), bias_softmax, name=scope.name)
            tf.summary.histogram('softmax_output_distribution', softmax_linear)
            tf.summary.histogram('softmax_weights', weight_softmax)
            tf.summary.histogram('softmax_biases', bias_softmax)

        tf.summary.histogram('weights_conv1', weights['weights_conv1'])
        tf.summary.histogram('weights_conv2', weights['weights_conv2'])
        tf.summary.histogram('weights_conv3', weights['weights_conv3'])
        tf.summary.histogram('weights_conv4', weights['weights_conv4'])
        tf.summary.histogram('weights_conv5', weights['weights_conv5'])
        tf.summary.histogram('biases_conv1', biases['bc1'])
        tf.summary.histogram('biases_conv2', biases['bc2'])
        tf.summary.histogram('biases_conv3', biases['bc3'])
        tf.summary.histogram('biases_conv4', biases['bc4'])
        tf.summary.histogram('biases_conv5', biases['bc5'])

        return softmax_linear
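Since `tf_shape` is hard-coded to 1024 in `local1`, it may be worth sanity-checking that value against the strides and paddings used in `inference`. The sketch below uses the standard output-size rules (`SAME`: ceil(size / stride); `VALID`: floor((size - ksize) / stride) + 1) and assumes square inputs. The 112x112 input size is purely a hypothetical example, because the actual frame size is not stated here; `conv_same`, `pool_valid` and `flattened_size` are illustrative helper names.

```python
import math


def conv_same(size, stride):
    """Spatial output size of a convolution with padding='SAME'."""
    return math.ceil(size / stride)


def pool_valid(size, ksize=3, stride=2):
    """Spatial output size of a pooling layer with padding='VALID'."""
    return (size - ksize) // stride + 1


def flattened_size(input_size, channels=256):
    """Number of features entering local1 for a square input image."""
    size = conv_same(input_size, 4)  # conv1, stride 4 (LRN keeps the size)
    size = pool_valid(size)          # pool1
    size = pool_valid(size)          # pool2 (conv2 is stride 1, SAME)
    size = pool_valid(size)          # final pool (conv3-conv5 are stride 1, SAME)
    return size * size * channels


# Hypothetical 112x112 input: 112 -> 28 -> 13 -> 6 -> 2, so 2 * 2 * 256 features.
print(flattened_size(112))
```

If the real input size gives a different number, the `tf.reshape(pool3, [-1, tf_shape])` call would silently regroup values across examples instead of failing, so the printed `shape` is worth comparing with 1024.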

    # Note that this is the RMSE
    with tf.name_scope('loss'):
        # Note that the dimension of cost is [batch_size, 1]. Every example has one output and a batch
        # is a number of examples.
        cost = tf.sqrt(tf.square(tf.subtract(predictions, y_valence)))
        cost_scalar = tf.reduce_mean(tf.multiply(cost, confidence_holder), reduction_indices=0)
        # Up to here cost_scalar has the following shape: [[#num]]... That is why I used cost_scalar[0]
        tf.summary.scalar("loss", cost_scalar[0])

    with tf.name_scope('train'):
        optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost_scalar)
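A small pure-Python sketch of what the loss expression above appears to compute may be useful here. Because the square root is taken elementwise before the mean, `sqrt(square(x))` reduces to `abs(x)`, so the result looks like a confidence-weighted mean absolute error rather than an RMSE in the usual sense (square root of the mean squared error). The function names and the sample numbers are illustrative assumptions only.

```python
import math


def posted_loss(predictions, targets, confidences):
    """Mirror of the graph above: mean over the batch of confidence * sqrt(square(error))."""
    per_example = [math.sqrt((p - t) ** 2) for p, t in zip(predictions, targets)]
    weighted = [e * c for e, c in zip(per_example, confidences)]
    return sum(weighted) / len(weighted)


def rmse(predictions, targets):
    """Conventional RMSE: square root of the mean squared error."""
    squared = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return math.sqrt(sum(squared) / len(squared))


# Illustrative values only.
preds = [1.0, 3.0]
targets = [2.0, 1.0]
confidences = [1.0, 0.5]

print("posted loss:", posted_loss(preds, targets, confidences))
print("conventional RMSE:", rmse(preds, targets))
```

The two quantities generally differ, which matters mostly for how the loss curve should be read, not necessarily for whether the weights move.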

Any help is much appreciated!!