The solution depends on what you want to do. Here are a few options:

Lower priorities of processes

You can nice the subprocesses. They will still eat 100% of the CPU, but when you start other applications, the OS gives preference to those applications. If you want to leave a compute-intensive job running in the background on your laptop and don't care about the CPU fan running all the time, then setting the nice value with psutil is your solution. The following test script runs on all cores for long enough that you can see how it behaves.

    from multiprocessing import Pool, cpu_count
    import math
    import os

    import psutil


    def f(i):
        return math.sqrt(i)


    def limit_cpu():
        """Called once at the start of every worker process."""
        p = psutil.Process(os.getpid())
        if os.name == 'nt':
            # Windows: pick one of the priority classes
            p.nice(psutil.BELOW_NORMAL_PRIORITY_CLASS)
        else:
            # Unix: 19 is the lowest priority
            p.nice(19)


    if __name__ == '__main__':
        # Pool(None, ...) starts "number of cores" worker processes
        pool = Pool(None, limit_cpu)
        for p in pool.imap(f, range(10**8)):
            pass

The trick is that limit_cpu runs at the start of every worker process (see the initializer argument in the multiprocessing.Pool documentation). Whereas Unix has niceness levels from -20 (highest priority) to 19 (lowest priority), Windows has a few distinct priority classes. BELOW_NORMAL_PRIORITY_CLASS probably fits your requirements best; there is also IDLE_PRIORITY_CLASS, which tells Windows to run your process only when the system is idle.
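On Unix, the same initializer idea also works without psutil. The following is a minimal sketch, assuming a Unix-like system where os.nice and os.getpriority are available (they are not on Windows). The names lower_priority and report_niceness are illustrative and not part of any library.

```python
import os
from multiprocessing import Pool


def lower_priority():
    """Pool initializer: raise this worker's niceness (Unix only)."""
    # os.nice() takes an increment, not an absolute value; the kernel
    # clamps the result at the maximum niceness, so a large increment
    # ends up at (or next to) the lowest priority.
    os.nice(19)


def report_niceness(_):
    """Return the niceness of the worker process that ran this task."""
    return os.getpriority(os.PRIO_PROCESS, 0)


if __name__ == '__main__':
    with Pool(2, lower_priority) as pool:
        # Each entry is the niceness observed inside a worker process.
        print(pool.map(report_niceness, range(4)))
```

Raising the niceness needs no special privileges; lowering it again (a negative increment) normally does, so the workers stay at low priority for their whole lifetime.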

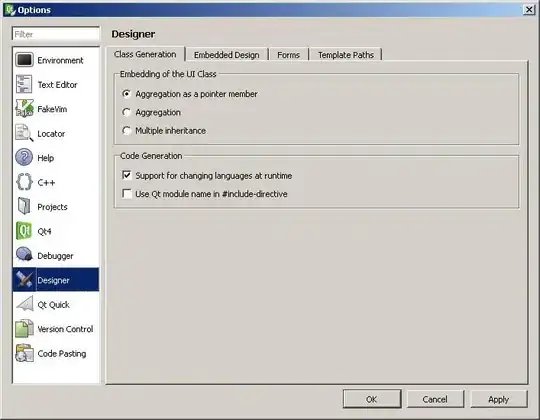

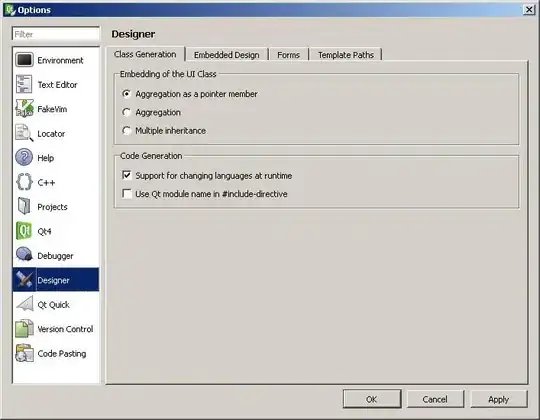

You can view the priority if you switch to detail mode in Task Manager and right click on the process:

Lower number of processes

Although you have rejected this option, it might still be a good one. Say you limit the number of subprocesses to half the CPU cores using pool = Pool(max(cpu_count()//2, 1)). The OS then initially runs those processes on half the CPU cores, while the others stay idle or run whatever other applications are currently active. After a short time, the OS reschedules the processes and might move them to other CPU cores, and so on. Both Windows and Unix-based systems behave this way.

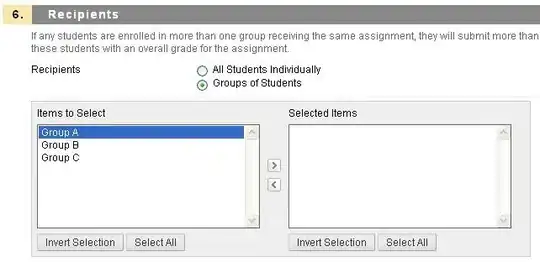

Windows: Running 2 processes on 4 cores:

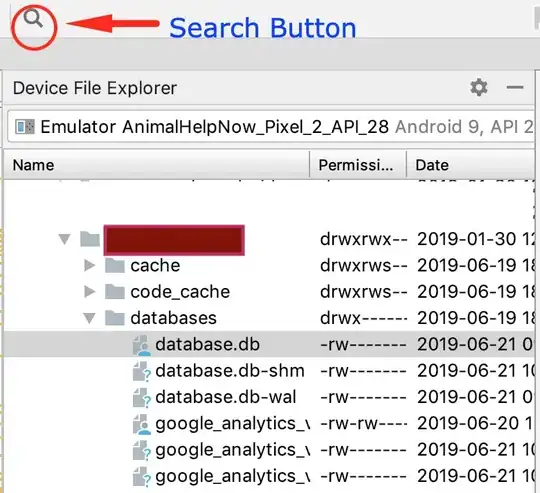

OSX: Running 4 processes on 8 cores:

You can see that both operating systems balance the processes across the cores, although not evenly, so a few cores still show higher percentages than others.
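A self-contained version of the half-the-cores idea might look like the sketch below. The helper worker_count and its fraction parameter are hypothetical names introduced here for illustration; only Pool, cpu_count and math.sqrt come from the standard library.

```python
import math
from multiprocessing import Pool, cpu_count


def worker_count(total_cores, fraction=0.5):
    """Number of workers for a given share of the cores, never below one."""
    return max(int(total_cores * fraction), 1)


if __name__ == '__main__':
    n_workers = worker_count(cpu_count())
    print(f"using {n_workers} of {cpu_count()} cores")
    with Pool(n_workers) as pool:
        # imap yields results lazily; chunksize batches the tasks so
        # less time is spent on inter-process communication.
        for _ in pool.imap(math.sqrt, range(10**6), chunksize=10_000):
            pass
```

The max(..., 1) guard matters on single-core machines, where half the cores would otherwise round down to zero workers.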

Sleep

If you want to be absolutely sure that your processes never eat 100% of a given core (e.g. to keep the CPU fan from spinning up), you can call sleep in your processing function:

    import math
    from time import sleep

    def f(i):
        sleep(0.01)
        return math.sqrt(i)

This makes the OS "schedule out" your process for 0.01 seconds on each computation and makes room for other applications. If there are no other applications, the CPU core is idle during that time, so it will never reach 100%. You'll need to experiment with different sleep durations, and the right value will vary from computer to computer. If you want to make it very sophisticated, you could adapt the sleep depending on what cpu_times() reports.
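One way to adapt the sleep is to measure how much CPU time each batch of work used and pause in proportion. The sketch below is only an illustration under stated assumptions: it uses time.process_time() from the standard library rather than psutil's cpu_times(), and the names pause_for, throttled_sqrt, target_load and batch are made up for this example.

```python
import math
import time


def pause_for(busy_seconds, target_load):
    """Sleep time that keeps busy / (busy + sleep) at target_load."""
    if not 0 < target_load <= 1:
        raise ValueError("target_load must be in (0, 1]")
    return busy_seconds * (1 - target_load) / target_load


def throttled_sqrt(values, target_load=0.5, batch=10_000):
    """Compute square roots in batches, sleeping after each batch."""
    results = []
    for start in range(0, len(values), batch):
        # process_time() counts CPU time used by this process only,
        # so time spent sleeping is not included in the measurement.
        cpu_before = time.process_time()
        results.extend(math.sqrt(v) for v in values[start:start + batch])
        busy = time.process_time() - cpu_before
        time.sleep(pause_for(busy, target_load))
    return results
```

Sleeping once per batch instead of once per item keeps the overhead of the sleep call itself small; the batch size and target load would still need tuning per machine.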