I'm wondering if there is some Python code or magics I can execute that will restart the IPython cluster. It seems like every time I change my code, the cluster needs to be restarted.


I wonder if there is a notebook extension that could do this, but I'd have doubts about code running inside the notebook that restarts it; it could result in an infinite loop of running and restarting. – Tadhg McDonald-Jensen Dec 22 '16 at 15:22

2 Answers

A simple idea, though it seems too "hacky" for production:

Set up the Client and define a simple function for testing.

    import ipyparallel as ipp

    c = ipp.Client()
    dv = c[:]

    # simple function
    @dv.remote(block=True)
    def getpid():
        import os
        return os.getpid()

    getpid()

    [1994, 1995, 1998, 2001]

Define a function to restart the cluster. Calling shutdown with targets='all' and hub=True should kill the entire cluster. Then start a new cluster with the ! or %sx magic command.

    import time

    def restart_ipcluster(client):
        client.shutdown(targets='all', hub=True)
        time.sleep(5)  # give the cluster a few seconds to shutdown
        # include other args as necessary
        !ipcluster start -n4 --daemonize
        time.sleep(60)  # give cluster ~min to start
        return ipp.Client()  # with keyword args as necessary
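The fixed sleeps in this function are a guess at how long the cluster takes to come up. As a minimal sketch, polling for readiness is one alternative. Here `wait_until` is a hypothetical helper (not part of ipyparallel), and the predicate shown in the comment assumes a connected `ipp.Client` whose `ids` attribute lists the registered engines.

```python
import time

def wait_until(predicate, timeout=60.0, interval=0.5):
    """Poll predicate() until it is truthy or `timeout` seconds have passed.

    Returns True if the predicate succeeded, False if the timeout expired.
    """
    deadline = time.monotonic() + timeout
    while True:
        if predicate():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)

# Possible use with a real client (assumption: 4 engines were requested):
#     client = ipp.Client()
#     wait_until(lambda: len(client.ids) >= 4, timeout=60)
```

This returns as soon as the engines have registered instead of always waiting the full minute, and it reports a timeout rather than silently continuing.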

One drawback to this approach is that the DirectView needs to be re-assigned and any function decorated with dv.remote or dv.parallel needs to be re-executed.

    c = restart_ipcluster(c)
    dv = c[:]

    @dv.remote(block=True)
    def getpid():
        import os
        return os.getpid()

    getpid()

    [3620, 3621, 3624, 3627]
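One way to reduce the re-execution burden might be to wrap the decorated definitions in a factory that takes the view as an argument, so that they can be rebuilt with a single call after each restart. This is only a sketch: `make_getpid` is a hypothetical name, and `view` is assumed to be an ipyparallel DirectView such as `c[:]`.

```python
def make_getpid(view):
    """Return a getpid function bound to `view` via view.remote(block=True)."""
    @view.remote(block=True)
    def getpid():
        import os
        return os.getpid()
    return getpid

# After each restart (assumption: `c` is the new client):
#     getpid = make_getpid(c[:])
#     getpid()
```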

Reading the source for the ipyparallel Client, the shutdown method mentioned above has a keyword argument, restart=False, but it's currently not implemented. Maybe the devs are working on a reliable method.


Thanks for the suggestion; I'll give it a go. Is there a reason why, by design, the main notebook has no process control? – cjm2671 Dec 29 '16 at 14:15

One can start/stop an IPython cluster using the class Cluster.

Class representing an IPP cluster.

i.e. one controller and one or more groups of engines.

Can start/stop/monitor/poll cluster resources.

All async methods can be called synchronously with a _sync suffix, e.g. cluster.start_cluster_sync()

Here's a demo:

Start the cluster and check if it's running

    import ipyparallel as ipp

    cluster = ipp.Cluster(n=4)

    # start cluster asynchronously (or cluster.start_cluster_sync() without await)
    await cluster.start_cluster()
    # Using existing profile dir: '/Users/X/.ipython/profile_default'
    # Starting 4 engines with <class 'ipyparallel.cluster.launcher.LocalEngineSetLauncher'>
    # <Cluster(cluster_id='1648978529-rzr8', profile='default', controller=<running>, engine_sets=['1648978530'])>

    rc = ipp.Client(cluster_id='1648978529-rzr8')

    cluster._is_running()
    # True

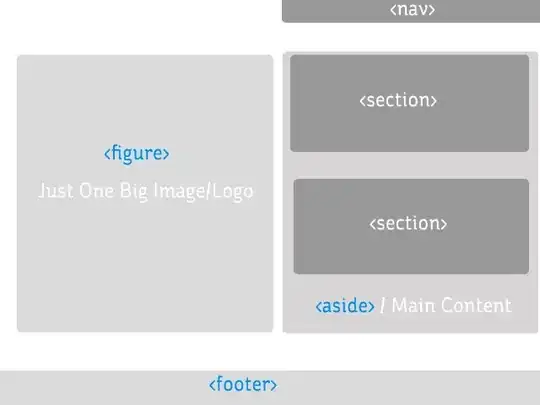

This can be also seen in the Clusters tab of the Jupyter notebook:

Stop the cluster

    # stop cluster synchronously
    cluster.stop_cluster_sync()

    cluster._is_running()
    # False

Restart the cluster

    # start cluster synchronously
    cluster.start_cluster_sync()

    cluster._is_running()
    # True
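The stop and start steps above could be combined into one helper. The following is a minimal sketch, not an official ipyparallel function: `restart_cluster` is a hypothetical name, and it assumes the object passed in behaves like `ipp.Cluster`, including a `connect_client_sync()` method that follows the same `_sync` naming as the other calls.

```python
def restart_cluster(cluster):
    """Stop and start `cluster`, then return a freshly connected client.

    `cluster` is assumed to expose the synchronous ipp.Cluster methods
    stop_cluster_sync(), start_cluster_sync() and connect_client_sync().
    """
    cluster.stop_cluster_sync()
    cluster.start_cluster_sync()
    return cluster.connect_client_sync()

# Possible use (assumption: `cluster` is the ipp.Cluster created earlier):
#     rc = restart_cluster(cluster)
#     dv = rc[:]
```

Any client or view created before the restart points at the old controller, so the returned client should replace it. Engines may take a moment to register after the call returns.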

Use cluster as a context manager

A cluster can also be used as a context manager using a with-statement (https://ipyparallel.readthedocs.io/en/latest/examples/Cluster%20API.html#Cluster-as-a-context-manager):

    import os

    with ipp.Cluster(n=4) as rc:
        engine_pids = rc[:].apply_async(os.getpid).get_dict()

    engine_pids

This way the cluster will only exist for the duration of the computation.

See also my other answer.
