I set up a new Hadoop cluster (6 machines) with HDP 2.5. The installation went well, and at first everything seemed to work properly. After a few minutes, however, two of the HBase services stopped working:

- HBase / host1.mydomain.de

- HBase Master Process: Connection failed [Errno 111] Connection refused to host1.mydomain.de

- HBase / host6.mydomain.de

- HBase RegionServer Process: Connection failed [Errno 111] Connection refused to host6.mydomain.de
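
Errno 111 is `ECONNREFUSED` on Linux, i.e. nothing accepted the TCP connection on the target port. The alerts above can be reproduced outside Ambari with a small probe like the one below. This is only a sketch: port 16000 for the Master is taken from the thread names in the log further down, while 16020 for the RegionServer is assumed to be the default, and the ports Ambari itself checks may differ.

```python
import errno
import socket


def probe(host, port, timeout=3.0):
    """Try a plain TCP connect and return a short status string."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # Same condition Ambari reports as [Errno 111] on Linux.
        return "refused"
    except socket.timeout:
        return "timeout"
    except OSError as exc:
        # DNS failures, unreachable networks, etc.
        return "error: " + errno.errorcode.get(exc.errno, str(exc))


if __name__ == "__main__":
    # Hostnames are from the post; the ports are assumptions (see above).
    targets = [
        ("host1.mydomain.de", 16000),  # HBase Master RPC
        ("host6.mydomain.de", 16020),  # HBase RegionServer RPC (assumed default)
    ]
    for host, port in targets:
        print(host, port, probe(host, port))
```

A result of `refused` would suggest the process is not listening at all (for example because it exited), whereas `timeout` would point more towards packets being dropped on the way.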

While searching for this issue, I found these tips:

- check and enable NTPD (enabled before installation, still enabled)

- check and disable Firewall (disabled before installation, still disabled)

- check and disable SELinux (disabled before installation, still disabled)
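
To re-verify these three points on every host in one go, something like the following could be used. It is a rough sketch that assumes a systemd-based host where `systemctl` and `getenforce` are available and where the firewall service is `firewalld`; on other setups the commands in `CHECKS` would need to be adapted. The names `CHECKS`, `run` and `problems` are made up for this example.

```python
import subprocess

# Assumption: systemd-based host with firewalld; adjust for other init systems.
CHECKS = {
    "ntpd": ["systemctl", "is-active", "ntpd"],
    "firewalld": ["systemctl", "is-active", "firewalld"],
    "selinux": ["getenforce"],
}


def run(cmd):
    """Run a command and return its lower-cased stdout, or 'unknown' on failure."""
    try:
        result = subprocess.run(
            cmd,
            stdout=subprocess.PIPE,
            stderr=subprocess.DEVNULL,
            universal_newlines=True,
            timeout=10,
        )
        return result.stdout.strip().lower() or "unknown"
    except (OSError, subprocess.TimeoutExpired):
        return "unknown"


def problems(status):
    """Compare collected states against the three tips and list deviations."""
    found = []
    if status.get("ntpd") != "active":
        found.append("ntpd is not running")
    if status.get("firewalld") == "active":
        found.append("firewalld is running")
    if status.get("selinux") == "enforcing":
        found.append("SELinux is enforcing")
    return found


if __name__ == "__main__":
    status = {name: run(cmd) for name, cmd in CHECKS.items()}
    print(status)
    for line in problems(status) or ["no deviations found"]:
        print(line)
```

Running it on each of the six machines (e.g. over the existing password-less SSH) should show quickly whether any host differs from the others.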

The point is that all services were running at the beginning, so the items listed above should be configured correctly.

Here is what I can say about my cluster configuration:

- the Ambari-Server host (host1) can reach all other hosts via ping and can connect to them password-less via SSH

- installed components are HDFS, YARN, MR2, Tez, Hive, HBase, Pig, ZooKeeper, AmbariMetrics, Knox, Spark, Slider

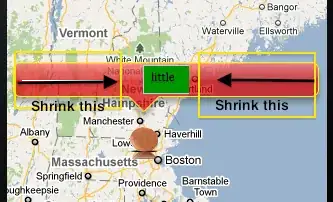

- I left all the default settings during installation, and I have ignored the following warning:

The log file /var/log/hbase/hbase-hbase-master-host1.domain.de.log contains the following snippet (IP addresses are masked as a.a.a.a / b.b.b.b / x.x.x.x / y.y.y.y / z.z.z.z):

2016-11-22 18:50:53,007 INFO [master/host1.xxx.de/xxx.xxx.xxx.xxx:16000] client.ZooKeeperRegistry: ClusterId read in ZooKeeper is null

2016-11-22 18:51:59,581 INFO [Thread-70] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as bbb.bbb.bbb.bbb:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:51:59,584 INFO [Thread-70] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741825_1001

2016-11-22 18:51:59,597 INFO [Thread-70] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[bbb.bbb.bbb.bbb:50010,DS-7691d8f6-0c76-4780-9836-85f20f935dd6,DISK]

2016-11-22 18:52:33,674 INFO [Thread-70] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as zzz.zzz.zzz.zzz:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:33,675 INFO [Thread-70] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741837_1013

2016-11-22 18:52:33,683 INFO [Thread-70] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[zzz.zzz.zzz.zzz:50010,DS-15d2586e-09a9-41ed-898d-689e15cd6596,DISK]

2016-11-22 18:52:33,771 WARN [host1:16000.activeMasterManager] hdfs.DFSClient: Slow waitForAckedSeqno took 100584ms (threshold=30000ms)

2016-11-22 18:52:33,797 INFO [host1:16000.activeMasterManager] util.FSUtils: Created version file at hdfs://host1.xxx.de:8020/apps/hbase/data with version=8

2016-11-22 18:52:36,820 INFO [Thread-76] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as yyy.yyy.yyy.yyy:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:36,821 INFO [Thread-76] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741843_1019

2016-11-22 18:52:36,828 INFO [Thread-76] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[yyy.yyy.yyy.yyy:50010,DS-439b87d1-f08d-464c-b0e2-728987cd211d,DISK]

2016-11-22 18:52:37,567 INFO [Thread-76] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as zzz.zzz.zzz.zzz:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:37,567 INFO [Thread-76] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741845_1021

2016-11-22 18:52:37,575 INFO [Thread-76] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[zzz.zzz.zzz.zzz:50010,DS-15d2586e-09a9-41ed-898d-689e15cd6596,DISK]

2016-11-22 18:52:40,589 INFO [Thread-76] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as aaa.aaa.aaa.aaa:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:40,589 INFO [Thread-76] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741846_1022

2016-11-22 18:52:40,593 INFO [Thread-76] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[aaa.aaa.aaa.aaa:50010,DS-15ea9223-2b1b-4f86-8797-ff0e2aaa6787,DISK]

2016-11-22 18:52:40,694 INFO [host1:16000.activeMasterManager] master.MasterFileSystem: BOOTSTRAP: creating hbase:meta region

2016-11-22 18:52:40,699 INFO [host1:16000.activeMasterManager] regionserver.HRegion: creating HRegion hbase:meta HTD == 'hbase:meta', {TABLE_ATTRIBUTES => {IS_META => 'true', coprocessor$1 => '|org.apache.hadoop.hbase.coprocessor.Mul$

2016-11-22 18:52:43,741 INFO [Thread-79] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as yyy.yyy.yyy.yyy:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:43,742 INFO [Thread-79] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741848_1024

2016-11-22 18:52:43,744 INFO [Thread-79] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[yyy.yyy.yyy.yyy:50010,DS-fc167096-246b-4215-b344-be786d98c472,DISK]

2016-11-22 18:52:46,760 INFO [Thread-79] hdfs.DFSClient: Exception in createBlockOutputStream

java.io.IOException: Got error, status message , ack with firstBadLink as zzz.zzz.zzz.zzz:50010

at org.apache.hadoop.hdfs.protocol.datatransfer.DataTransferProtoUtil.checkBlockOpStatus(DataTransferProtoUtil.java:140)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.createBlockOutputStream(DFSOutputStream.java:1393)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1295)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:463)

2016-11-22 18:52:46,760 INFO [Thread-79] hdfs.DFSClient: Abandoning BP-90489822-xxx.xxx.xxx.xxx-1479836232259:blk_1073741849_1025

2016-11-22 18:52:46,766 INFO [Thread-79] hdfs.DFSClient: Excluding datanode DatanodeInfoWithStorage[zzz.zzz.zzz.zzz:50010,DS-c1dc059d-8f0b-4971-88cc-ebc76dd8659a,DISK]
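
To see at a glance which DataNodes the HDFS client keeps rejecting, the `firstBadLink` entries can be tallied with a short script. This is a minimal sketch; the function name `count_bad_links` is made up for the example, and the regular expression only assumes the message format visible in the excerpt above.

```python
import re
import sys
from collections import Counter

# Matches e.g. "... ack with firstBadLink as 10.0.0.5:50010"
BAD_LINK = re.compile(r"firstBadLink as (\S+):(\d+)")


def count_bad_links(lines):
    """Count how often each (address, port) pair is reported as firstBadLink."""
    counts = Counter()
    for line in lines:
        match = BAD_LINK.search(line)
        if match:
            counts[(match.group(1), int(match.group(2)))] += 1
    return counts


if __name__ == "__main__":
    # Usage: python bad_links.py /var/log/hbase/<master log file>
    with open(sys.argv[1], errors="replace") as handle:
        for (addr, port), n in count_bad_links(handle).most_common():
            print(addr, port, n)
```

In the excerpt above, four different DataNode addresses show up this way, all on port 50010, so the write pipeline problem does not seem to be limited to a single machine.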

Can someone give me a clue as to why these (and only these) two services stop after a few minutes?