I have the following dataframe:

df = pd.DataFrame([

(1, 1, 'term1'),

(1, 2, 'term2'),

(1, 1, 'term1'),

(1, 1, 'term2'),

(2, 2, 'term3'),

(2, 3, 'term1'),

(2, 2, 'term1')

], columns=['id', 'group', 'term'])

I want to group it by id and group and calculate the number of each term for this id, group pair.

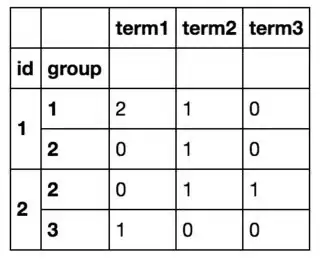

So in the end I am going to get something like this:

I was able to achieve what I want by looping over all the rows with df.iterrows() and creating a new dataframe, but this is clearly inefficient. (If it helps, I know the list of all terms beforehand and there are ~10 of them).

It looks like I have to group by and then count values, so I tried that with df.groupby(['id', 'group']).value_counts() which does not work because value_counts operates on the groupby series and not a dataframe.

Anyway I can achieve this without looping?