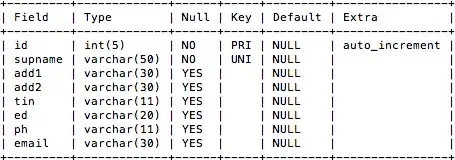

Hi, I have two NumPy arrays (in this case representing depth and percentage depth dose (PDD) data), as follows:

```python
import numpy as np

depth = np.array([0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,
                  1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8, 1.9, 2.0, 2.2,
                  2.4, 2.6, 2.8, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5])

pdd = np.array([80.40649399, 80.35692155, 81.94323956, 83.78981286,
                85.58681373, 87.47056637, 89.39149833, 91.33721651,
                93.35729334, 95.25343909, 97.06283306, 98.53761309,
                99.56624117, 100.0, 99.62820672, 98.47564754,
                96.33163961, 93.12182427, 89.0940637, 83.82699219,
                77.75436857, 63.15528566, 46.62287768, 29.9665386,
                16.11104226, 6.92774817, 0.69401413, 0.58247614,
                0.55768992, 0.53290371, 0.5205106])
```

which, when plotted, give the curve shown in the image above.

I need to find the depth at which the pdd falls to a given value (initially 50%). I have tried slicing the arrays at the point where the pdd reaches 100%, as I'm only interested in the points after it.
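The slicing step described above might look like the following minimal sketch. It assumes the peak is the maximum of `pdd` (here exactly 100.0 at depth 1.3); `np.argmax` returns the index of the first occurrence of the maximum. The names `peak`, `depth_fall` and `pdd_fall` are illustrative only.

```python
import numpy as np

# Arrays from the question
depth = np.array([0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,
                  1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8, 1.9, 2.0, 2.2,
                  2.4, 2.6, 2.8, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5])
pdd = np.array([80.40649399, 80.35692155, 81.94323956, 83.78981286,
                85.58681373, 87.47056637, 89.39149833, 91.33721651,
                93.35729334, 95.25343909, 97.06283306, 98.53761309,
                99.56624117, 100.0, 99.62820672, 98.47564754,
                96.33163961, 93.12182427, 89.0940637, 83.82699219,
                77.75436857, 63.15528566, 46.62287768, 29.9665386,
                16.11104226, 6.92774817, 0.69401413, 0.58247614,
                0.55768992, 0.53290371, 0.5205106])

# Index of the dose maximum (first occurrence if the maximum repeats)
peak = np.argmax(pdd)

# Keep only the fall-off region, from the peak onwards
depth_fall = depth[peak:]
pdd_fall = pdd[peak:]
```

After this slice, `pdd_fall` runs from 100 down to about 0.52, i.e. it is decreasing.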

Unfortunately, `np.interp` only appears to work when the x-coordinates are increasing, and here I want depth as a function of pdd, where the pdd values after the peak are decreasing.
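For reference, `np.interp(x, xp, fp)` only requires the x-coordinates `xp` to be increasing; the values `fp` can go in either direction. A minimal sketch of inverse interpolation under that constraint, assuming pdd falls strictly monotonically after the peak: reverse the fall-off segment so that pdd becomes an increasing `xp`, with depth as `fp`. `depth_at_dose` is a hypothetical helper name, and the result is a linear interpolation between neighbouring measured points.

```python
import numpy as np

depth = np.array([0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,
                  1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8, 1.9, 2.0, 2.2,
                  2.4, 2.6, 2.8, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5])
pdd = np.array([80.40649399, 80.35692155, 81.94323956, 83.78981286,
                85.58681373, 87.47056637, 89.39149833, 91.33721651,
                93.35729334, 95.25343909, 97.06283306, 98.53761309,
                99.56624117, 100.0, 99.62820672, 98.47564754,
                96.33163961, 93.12182427, 89.0940637, 83.82699219,
                77.75436857, 63.15528566, 46.62287768, 29.9665386,
                16.11104226, 6.92774817, 0.69401413, 0.58247614,
                0.55768992, 0.53290371, 0.5205106])


def depth_at_dose(depth, pdd, level):
    """Hypothetical helper: depth beyond the maximum where pdd falls to `level`."""
    peak = np.argmax(pdd)
    # Reverse the fall-off segment so pdd (used as xp) is increasing
    xp = pdd[peak:][::-1]
    fp = depth[peak:][::-1]
    # np.interp does not check monotonicity itself, so check it here
    if not np.all(np.diff(xp) > 0):
        raise ValueError("pdd is not strictly decreasing after the peak")
    return np.interp(level, xp, fp)


d50 = depth_at_dose(depth, pdd, 50.0)
```

With this data the 50% level falls between the measured points at depth 2.2 (pdd ≈ 63.2) and 2.4 (pdd ≈ 46.6), giving roughly 2.36. The explicit monotonicity check matters because, per the NumPy documentation, `np.interp` returns meaningless results rather than an error when `xp` is not increasing.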

Could anyone suggest where I should go next?