I am new to MongoDB, and this morning I had a (bad) idea. I was playing around with indexes from the shell and decided to create a large collection with many documents (100 million). So I executed the following command:

    for (i = 1; i <= 100; i++) {
        for (j = 100; j > 0; j--) {
            for (k = 1; k <= 100; k++) {
                for (l = 100; l > 0; l--) {
                    db.testIndexes.insert({a:i, b:j, c:k, d:l})
                }
            }
        }
    }

However, things didn't go as I expected:

- It took 45 minutes to complete the request.

- It created 16 GB data on my hard disk.

- It used 80% of my RAM (8 GB total) and didn't release it until I restarted my PC.

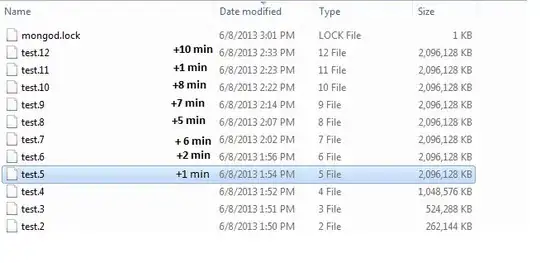

As you can see in the photo below, as the number of documents inside the collection was growing, the time of the insertion of documents was growing as well. I suggest that by the last modification time of the data files:

Is this expected behavior? I don't think 100 million simple documents is too many.

P.S. I am now really afraid to run an ensureIndex command.

Edit:

I executed the following command:

    > db.testIndexes.stats()
    {
        "ns" : "test.testIndexes",
        "count" : 100000000,
        "size" : 7200000056,
        "avgObjSize" : 72.00000056,
        "storageSize" : 10830266336,
        "numExtents" : 28,
        "nindexes" : 1,
        "lastExtentSize" : 2146426864,
        "paddingFactor" : 1,
        "systemFlags" : 1,
        "userFlags" : 0,
        "totalIndexSize" : 3248014112,
        "indexSizes" : {
            "_id_" : 3248014112
        },
        "ok" : 1
    }

So the default index on _id alone is more than 3 GB.
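
To put those numbers in per-document terms, here is a rough sketch of the arithmetic. The figures are copied from the stats output above; the helper name `summarize` and the `log` fallback are my own and not part of MongoDB. It should run in the mongo shell or in Node.js.

```javascript
// Figures copied from the db.testIndexes.stats() output above.
var stats = {
  count: 100000000,
  size: 7200000056,
  storageSize: 10830266336,
  totalIndexSize: 3248014112
};

// Hypothetical helper (not a MongoDB API): derives per-document
// and total figures from the raw byte counts.
function summarize(s) {
  var totalBytes = s.storageSize + s.totalIndexSize;
  return {
    dataBytesPerDoc: s.size / s.count,            // same ratio as avgObjSize
    indexBytesPerDoc: s.totalIndexSize / s.count, // cost of the _id index per document
    totalBytes: totalBytes,                       // allocated data storage plus indexes
    totalGiB: totalBytes / Math.pow(1024, 3)
  };
}

var summary = summarize(stats);

// The legacy mongo shell provides print(); Node.js provides console.log().
var log = (typeof print === "function") ? print : console.log;
log(JSON.stringify(summary, null, 2));
```

By this arithmetic the _id index costs roughly 32 bytes per document on top of the 72 bytes of data, and storage plus index comes to about 13.1 GiB. These two figures alone do not account for the full 16 GB I see on disk.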