I am learning about attention models and following along with Jay Alammar's amazing blog tutorial, The Illustrated Transformer. He gives a great walkthrough of how the attention scores are calculated, but I get a bit lost at a certain point: I am not seeing how the attention score matrix Z he explains is used to interpret the strength of associations between different words within an input sequence.

He mentions that given some input matrix X with shape N x D, where N is the number of elements in an input sequence and D is the input dimensionality, we multiply X by three separate weight matrices of shape D x d, where d is some lower dimensionality. This projects X into the query, key, and value matrices Q, K, and V, each of shape N x d.

The query matrix is dotted with the transpose of the key matrix, the result is divided by a scaling factor (usually the square root of the projected dimensionality d), and each row is then run through a softmax function. This produces a weight matrix of size N x N, which is multiplied by the value matrix to get an output Z of shape N x d, about which Jay says:

> That concludes the self-attention calculation. The resulting vector is one we can send along to the feed-forward neural network.
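To make the shapes concrete, here is a minimal NumPy sketch of the calculation as I understand it. The weights are random and the names `W_q`, `W_k`, `W_v`, `A`, and `self_attention` are my own, not taken from the blog; the sizes N = 6, D = 16, d = 4 are arbitrary.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max along the axis for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    # Project the N x D input into N x d queries, keys, and values.
    Q = X @ W_q
    K = X @ W_k
    V = X @ W_v
    d = Q.shape[-1]
    # Scaled dot products, softmaxed row by row: an N x N weight matrix.
    A = softmax(Q @ K.T / np.sqrt(d), axis=-1)
    # Each output row is a weighted average of the value rows: N x d.
    Z = A @ V
    return A, Z

# Random stand-ins for a trained model (assumption, for shape checking only).
rng = np.random.default_rng(0)
N, D, d = 6, 16, 4
X = rng.normal(size=(N, D))
W_q, W_k, W_v = (rng.normal(size=(D, d)) for _ in range(3))

A, Z = self_attention(X, W_q, W_k, W_v)
print(A.shape, Z.shape)  # (6, 6) (6, 4)
```

So the N x N object is the softmax output `A`, while `Z` has one d-dimensional row per sequence element.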

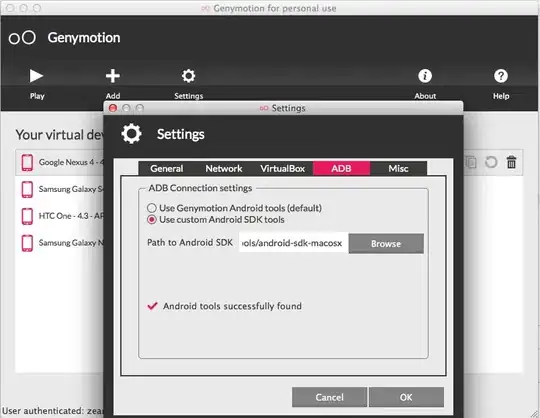

The screenshot from his blog for this calculation is below:

However, this is where I'm confused. Z is N x d, and I don't understand what I'm supposed to do with this matrix from an interpretability standpoint. As far as I understand, for a particular sequence element (e.g. the word cats in the sequence I love pets, especially cats), self-attention is supposed to score other parts of the sequence highly when they are relevant to or strongly associated with that word embedding. I'd therefore expect Z to be N x N, so that I could select Z[i,j] and say that, for the i-th word in the sequence, the j-th word relates to or associates with it this or that much.

In fact, wouldn't it make much more sense to use only the softmax output of the weights (without multiplying them by the value matrix), since it is already N x N? In essence, how is Jay determining the strength of these associations with the word "it" in this particular sequence?

This is an N by 1 relationship he is showing - there are N values that correspond with the strength of association to the word it.
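For concreteness, this is the kind of lookup I have in mind: take the N x N softmax output and read off the row for one word. This is only a sketch of my guess, not something taken from the blog. The scores are random stand-ins for the scaled query-key products, so the printed numbers mean nothing, and `associations` is a helper name I made up.

```python
import numpy as np

tokens = ["I", "love", "pets", "especially", "cats"]
N = len(tokens)

# Random stand-in for Q @ K.T / sqrt(d) (assumption: no trained model here).
rng = np.random.default_rng(0)
scores = rng.normal(size=(N, N))

# Row-wise softmax, so each row of A sums to 1.
A = np.exp(scores - scores.max(axis=1, keepdims=True))
A = A / A.sum(axis=1, keepdims=True)

def associations(A, tokens, word):
    # Row i of A holds the N weights that the i-th token puts on every token.
    i = tokens.index(word)
    return dict(zip(tokens, A[i]))

row = associations(A, tokens, "cats")
for tok, weight in row.items():
    print(f"{tok:>10s} {weight:.3f}")
```

Each call returns N values for one word, which matches the N by 1 relationship described above.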