> Filtering is one of those algorithms. Bandpass, lowpass, or highpass - all of them require looking to the last few samples generated in order to compute the result. This can't be done because those samples haven't been generated yet.

That's not right. IIR filters do need previous outputs, but FIR filters only need previous input samples. That access pattern is pretty typical for the things GPUs were designed to do, so it's unlikely to be a problem to let every processing core read, let's say, 64 input samples to produce one output sample -- in fact, the cache architectures that Nvidia and AMD use lend themselves to that.

> Is a constant-time algorithm achievable? If not, can it be proven that it isn't?

It is! In two respects:

- as mentioned above, FIR filters only need multiple samples of immutable input, so they can be parallelized heavily without problems, and

- even if you need to calculate your input first and would like to parallelize that (I don't see a reason to -- generating a sawtooth is limited by memory bandwidth, not by the CPU), every core could simply calculate the last N input samples itself. Sure, that's N-1 redundant operations per core, but as long as your number of cores is much bigger than your N, you will still be faster, and every core will have constant run time.
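To make the two points above concrete, here is a minimal plain-Python sketch (not GPU code). The names `sawtooth_sample`, `fir_output_at` and `fir_sequential`, the tap values and the sawtooth period are illustrative assumptions, not anything from the question. Each output sample is computed from its index alone: the "worker" regenerates the N input samples it needs and applies the taps, so its run time depends on N but not on where the sample sits in the stream.

```python
def sawtooth_sample(n, period=100):
    """Closed-form sawtooth in [-1, 1); depends only on the index n."""
    return 2.0 * (n % period) / period - 1.0


def fir_output_at(n, taps):
    """Output sample n of an FIR filter applied to the sawtooth.

    Regenerates the len(taps) input samples it needs; samples before
    index 0 are treated as zero. Work is O(len(taps)), independent of n.
    """
    acc = 0.0
    for k, h in enumerate(taps):
        i = n - k
        if i >= 0:
            acc += h * sawtooth_sample(i)
    return acc


def fir_sequential(x, taps):
    """Reference: direct-form FIR over an already generated input."""
    y = []
    for n in range(len(x)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k >= 0:
                acc += h * x[n - k]
        y.append(acc)
    return y


taps = [0.25, 0.25, 0.25, 0.25]  # 4-tap moving average (illustrative)
num_samples = 1000
x = [sawtooth_sample(n) for n in range(num_samples)]
reference = fir_sequential(x, taps)

# Evaluate the outputs in reverse order to show that no output depends
# on another output having been computed first.
independent = [fir_output_at(n, taps) for n in reversed(range(num_samples))]
independent.reverse()
```

On a GPU, each call to `fir_output_at` would correspond to one thread; the redundancy is that neighbouring threads regenerate overlapping input samples.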

Comments on your approach:

> I'm looking into the feasibility of GPU synthesized audio, where each thread renders a sample.

From a higher-level perspective, that sounds too fine-grained. I mean, let's say you have 3000 stream processors (high-end consumer GPU). Assuming a sampling rate of 44.1 kHz, and assuming each of these processors computes only one sample, letting them all run once only gives you 3000/44100 = 1/14.7 of a second (about 68 ms) of mono audio. Then you'd have to move on to the next part of the audio.

In other words: there are bound to be many, many more samples than processors. In these situations, it's typically far more efficient to let one processor handle a sequence of samples. For example, if you want to generate 30 s of audio, that'd be 1.323 MS (megasamples). Simply split the problem into 3000 chunks, one for each processor, and give each of them the 44100*30/3000 = 441 samples it should process plus 64 samples of "history" before the first of its "own" samples; all of that will still easily fit into local memory.
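The chunking scheme can be sketched in plain Python as follows. This is a CPU-side illustration under assumptions, not GPU code: `make_blocks`, `fir_block` and `fir_reference` are hypothetical helper names, the 64-tap moving average is just a placeholder filter, and the check runs on a scaled-down input (30 chunks instead of 3000) to keep it quick. It shows that filtering each chunk on its own, with 64 samples of history prepended, gives the same result as filtering the whole signal in one go.

```python
SAMPLE_RATE = 44100
DURATION_S = 30
NUM_PROCESSORS = 3000
HISTORY = 64  # samples of context before each chunk, as in the text

TOTAL_SAMPLES = SAMPLE_RATE * DURATION_S      # 1,323,000 samples
CHUNK_LEN = TOTAL_SAMPLES // NUM_PROCESSORS   # 441 samples per processor


def fir_reference(x, taps):
    """Direct-form FIR over the whole signal (zeros before the start)."""
    y = []
    for n in range(len(x)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k >= 0:
                acc += h * x[n - k]
        y.append(acc)
    return y


def make_blocks(x, chunk_len, history):
    """Cut x into chunks, each prefixed with `history` earlier samples."""
    padded = [0.0] * history + list(x)  # zeros stand in for "before the start"
    return [padded[s:s + history + chunk_len]
            for s in range(0, len(x), chunk_len)]


def fir_block(block, taps, history):
    """Filter one block independently; emit only the chunk's own samples."""
    if len(taps) - 1 > history:
        raise ValueError("history too short for this filter length")
    out = []
    for n in range(history, len(block)):
        acc = 0.0
        for k, h in enumerate(taps):
            acc += h * block[n - k]
        out.append(acc)
    return out


# Scaled-down check: 30 chunks of 441 samples instead of 3000.
DEMO_CHUNKS = 30
taps = [1.0 / 64] * 64  # placeholder 64-tap moving average
x = [2.0 * (n % 100) / 100 - 1.0 for n in range(DEMO_CHUNKS * CHUNK_LEN)]

blocks = make_blocks(x, CHUNK_LEN, HISTORY)
chunked = [y for block in blocks for y in fir_block(block, taps, HISTORY)]
reference = fir_reference(x, taps)
```

Each block holds 441 + 64 = 505 samples, and no block needs anything computed by another block, which is what makes the chunks independent.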

Yet another thought:

I'm coming from a software defined radio background, where there are usually millions of samples per second, rather than a few kHz of sampling rate, to be handled in real time (i.e. processing speed > sampling rate). Still, doing computation on the GPU only pays off for the more compute-intensive tasks, because there's significant overhead in exchanging data with the GPU, and CPUs nowadays are blazingly fast. So, for your relatively simple problem, it might never be faster to do things on the GPU than to optimize them on the CPU; things of course look different if you've got to process lots of samples, or a lot of streams, at once. For finer-grained tasks, the cost of filling a buffer, moving it to the GPU, and getting the result buffer back into your software usually kills the advantage.

Hence, I'd like to challenge you: Download the GNU Radio live DVD, burn it to a DVD or write it to a USB stick (you might as well run it in a VM, but that of course reduces performance if you don't know how to optimize your virtualizer; really - try it from a live medium), run

volk_profile

to let the VOLK library test which algorithms work best on your specific machine, and then launch

gnuradio-companion

Then open and run the following two signal processing flow graphs:

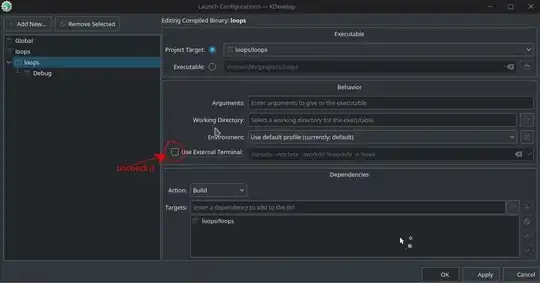

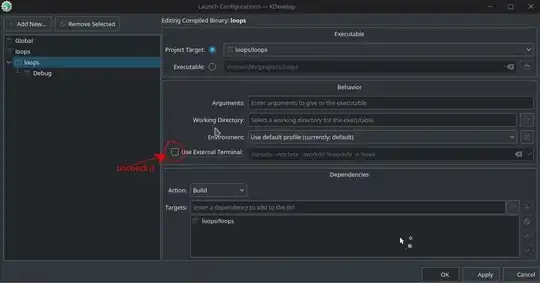

- "classical FIR":

This single-threaded implementation of the FIR filter yields about 50MSamples/s on my CPU.

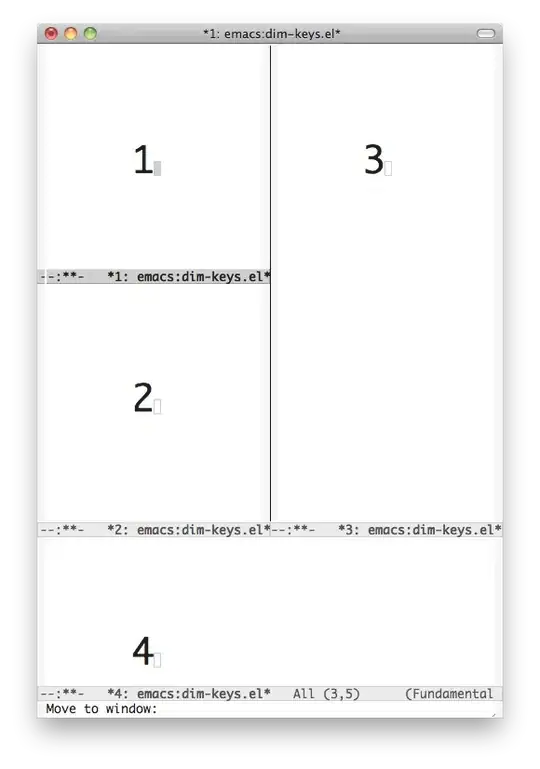

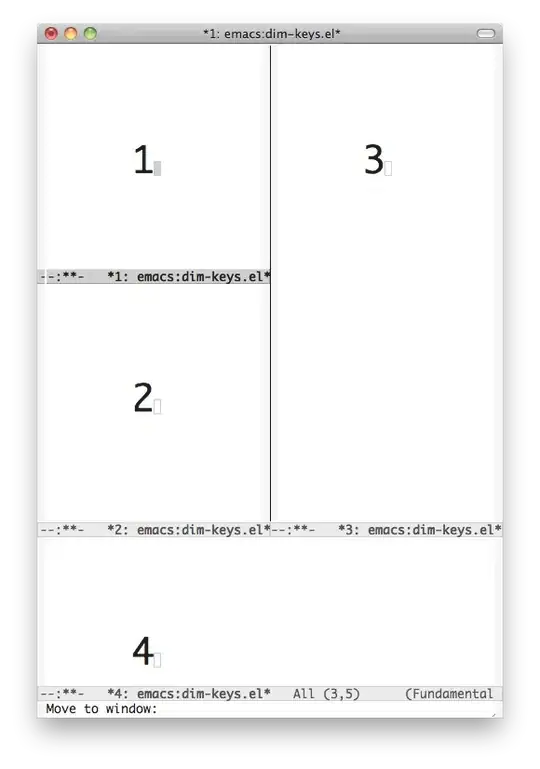

- FIR Filter implemented with the FFT, running on 4 threads:

This implementation reaches 160MSamples/s (!!) on my CPU alone.

Sure, with the help of FFTs on my GPU, I could be faster, but the thing here is: even with the "simple" FIR filter, I can get 50 megasamples per second out of a single CPU core -- meaning that, at a 44.1 kHz audio sampling rate, every second of processing time covers roughly 19 minutes of audio. No copying in and out of host RAM. No GPU cooler spinning up. It might really not be worth optimizing further. And if you do optimize and take the FFT filter approach: 160 MS/s means roughly one hour of audio per second of processing, including sawtooth generation.
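For reference, the conversion from throughput to "audio per second of processing" is just a division by the sampling rate. A tiny Python sketch follows; the helper name `audio_minutes_per_second` is only illustrative, and the throughput figures are the ones measured above on my machine.

```python
SAMPLE_RATE_HZ = 44_100


def audio_minutes_per_second(throughput_samples_per_s,
                             sample_rate_hz=SAMPLE_RATE_HZ):
    """Minutes of audio processed per second of wall-clock time."""
    return throughput_samples_per_s / sample_rate_hz / 60.0


fir_minutes = audio_minutes_per_second(50e6)    # roughly 19 minutes
fft_minutes = audio_minutes_per_second(160e6)   # roughly one hour

print(f"classical FIR: {fir_minutes:.1f} min of audio per second")
print(f"FFT filter:    {fft_minutes:.1f} min of audio per second")
```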